Research Help in 2026: Upgrade From AI Shortcut to Real Advantage

Welcome to the world of research help in 2025—where the only thing moving faster than your deadlines is the data avalanche. Forget those cozy academic workshops from pre-pandemic days, with their worn-out frameworks and bullet-pointed “research best practices.” Today, if you aren’t evolving, you’re drowning. Whether you’re a team leader at a multinational, an independent journalist, or a grad student caught between plagiarism software and AI hallucinations, research help has become brutally complex, cutthroat, and, yes, more rewarding—if you master the new rules. This isn’t about finding “sources”; it’s about hacking through information chaos, leveraging next-gen AI, and sidestepping pitfalls that can tank your credibility (or your entire project). Here’s the unapologetic, research-verified guide to not just surviving but dominating modern research in 2025.

Why classic research advice is failing you now

The problem with old-school research methods

If you’re still clinging to the research rituals you learned in 2010, brace yourself for disappointment. The digital landscape has mutated. Vast tracts of information once considered goldmines are now digital wastelands—outdated, echo-chamber-ridden, or manipulated by algorithmic incentive. According to the World Economic Forum’s Future of Jobs Report 2025, a staggering 44% of workers’ core skills have changed, and research is at the epicenter of this realignment (World Economic Forum, 2025). Classic literature reviews and manual citation dogma crumble under the weight of “data overload.” This is more than just a productivity killer. It’s a credibility risk.

The sheer volume of available data is both a blessing and a curse. Traditional methods—think slow, linear database trawling—collapse under the pressure of exponential information growth. The result: missed insights, cognitive fatigue, and the silent killer of all research projects—decision paralysis.

"If you research like it's 2010, you're already lost." — Maya, digital research strategist

The introduction of AI and the democratization of machine-learning tools have shifted the research ground rules. In a world where AI adoption in research is growing at 33% year on year (National University, 2025), manual-only workflows aren’t just inefficient—they’re career suicide.

Red flags that your research workflow is stuck in the past:

- You rely on a handful of favorite databases and rarely explore new sources.

- Your “fact-checking” is still mostly manual.

- You avoid or distrust AI-powered tools, believing they’re “unreliable.”

- Most of your research process is linear, not iterative or collaborative.

- You spend hours reformatting citations or summarizing findings by hand.

If any of these sound familiar, it’s time to overhaul your research toolbox—urgently.

The rise of AI-powered research teammates

Gone are the days when “automating research” meant running a few keyword searches or using a citation manager. Today, AI-enabled research help solutions like intelligent enterprise teammates (integrated into platforms such as futurecoworker.ai) can parse thousands of documents in seconds, surface hidden patterns, and even predict which findings are most likely to impact your field.

But don’t confuse automation with true AI collaboration. Automation speeds up repetitive tasks; AI collaboration transforms the research journey by combining human intuition with machine speed, surfacing insights that neither could reveal alone.

| Workflow Element | Manual Approach | AI-Augmented Approach | Key Risks (Manual) |

|---|---|---|---|

| Source Identification | Manual database search | Semantic AI search, context analysis | Missed sources |

| Data Extraction | Copy-paste, manual notes | Automated, contextual summarization | Human error, fatigue |

| Analysis | Manual synthesis | Pattern recognition, anomaly detection | Cognitive overload |

| Citation Management | Manual formatting | Automated, dynamic updates | Formatting mistakes |

| Quality Control | Peer review | AI cross-validation, human oversight | Bias, oversight lapses |

Table 1: Comparative breakdown of manual vs. AI-augmented research workflows. Source: Original analysis based on World Economic Forum, National University (2025).

The first time a distributed team used an intelligent enterprise teammate, the difference wasn’t just speed. It was revelation. Suddenly, cross-departmental insights emerged, data silos shattered, and bottlenecks evaporated. What used to take a week—a comprehensive literature review, synthesis, and action plan—now happens before the second coffee break.

Real-world consequences: When old advice goes wrong

Case in point: a global product launch derailed by reliance on outdated market research methods. The team, wedded to old-school survey databases and manual spreadsheet crunching, missed a major shift in consumer sentiment. The blowback? Cost overruns, missed KPIs, and a reputational hit that took months to recover from.

Hidden costs pile up: lost time, staff burnout, and, most insidious of all, acting on obsolete or misinterpreted data. According to StartUs Insights, 2025, the research help industry is growing at 21.5% annually, driven by the urgent need for up-to-date, AI-empowered workflows.

"The real risk is acting on yesterday’s facts." — Alex, enterprise research lead

Here’s what could have been done differently: By leveraging AI to automatically synthesize fresh market data and validate sources in real-time, the team could have pivoted instantly, saving millions and protecting market reputation. Modern research help isn’t just about efficiency—it’s about survival.

Decoding the new research workflow: 2025 edition

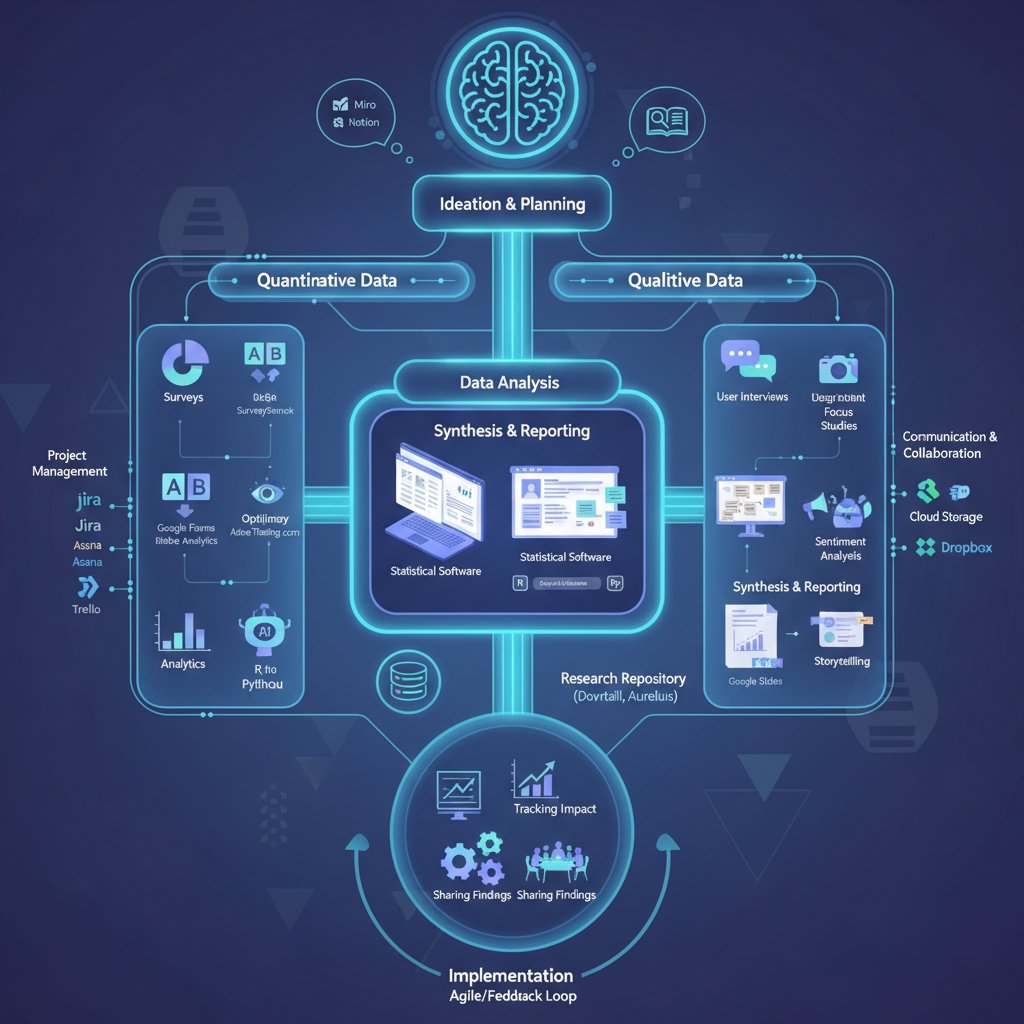

Mapping the modern research journey step by step

Welcome to the new research journey, where each step is a tactical move in a high-stakes information war. Forget the slow grind—here’s how next-gen research help actually works.

- Define your question with context. Use AI teammates to clarify objectives, highlight assumptions, and suggest broader angles.

- Source discovery via semantic AI. Tap into advanced search tools that crawl not just databases, but forums, preprints, and live data feeds.

- Rapid source validation. AI cross-checks credibility, flags potential bias, and highlights peer-reviewed status.

- Automated summarization and initial synthesis. Machine learning models deliver concise abstracts, isolating actionable insights.

- Human insight injection. Researchers interrogate the AI findings, add context, and perform “gut checks” for plausibility.

- Collaborative review. Team members (and their AI agents) discuss, annotate, and challenge findings—iteration is the new norm.

- Actionable reporting. AI generates dynamic reports, replete with links to verified sources and real-time updates.

- Continuous learning. Every project feeds back into the system, refining both human and AI performance.

Tips for each workflow stage:

- At sourcing, prioritize diversity—don’t just rely on one database.

- During validation, always cross-reference at least two sources before acting.

- Inject human intuition before making high-stakes decisions.

- Use dynamic reporting tools with live links and auto-updates to keep stakeholders informed.

Human vs. AI: Who does what best?

Here’s where the new research help paradigm gets interesting: you don’t have to pick a side. Research shows that the best outcomes happen when human researchers and AI teammates play to their strengths.

| Task | Best Handled By Humans | Best Handled By AI | Best Handled By Both |

|---|---|---|---|

| Problem framing | Contextual intuition, nuance | Context crawling, data mining | Hybrid brainstorming |

| Source credibility assessment | Bias detection, ethical judgment | Link validation, fact-checking | Final review, edge-case identification |

| Data extraction | Discernment of subtle cues | Bulk processing, summarization | Quality assurance |

| Insight generation | Creativity, lateral thinking | Pattern recognition, clustering | Synthesis, scenario planning |

| Communication & reporting | Narrative, storytelling | Automated updates, formatting | Mixed media reports, real-time dashboards |

Table 2: Human vs. AI division of labor in modern research help. Source: Original analysis based on World Economic Forum, StartUs Insights (2025).

Hybrid research in enterprise settings is no longer optional. For example, a finance team may rely on AI to parse thousands of SEC filings overnight and then task human analysts to identify anomalies with business significance. In academic research, AI proposes hypotheses based on literature trends, while scholars validate and contextualize results.

Tips for deciding when to trust AI vs. human judgment:

- Use AI to handle scale and speed—never to make final judgments in ambiguous or high-impact cases.

- Human oversight is crucial for ethics, narrative framing, and edge-case interpretation.

- Routinely audit both human and AI decisions for bias and oversight.

Spotlight: Collaborative research in action

Let’s get concrete. Picture a multinational marketing team using an AI-powered enterprise teammate to launch a global campaign. The process starts with the AI surfacing real-time consumer sentiment data from 12 countries. Human team members identify cultural nuances, then iterate campaign angles within hours—not weeks.

Hidden benefits of collaborative research help experts won’t tell you:

- Network effects: Every team member’s input trains the AI for future projects.

- Reduced knowledge silos: Cross-team insights flow instantly via shared AI channels.

- Continuous improvement: Feedback loops mean the system gets sharper with every iteration.

Results speak. According to recent case studies, collaborative teams using research help platforms like futurecoworker.ai deliver projects up to 25% faster, with fewer errors and higher stakeholder satisfaction compared to legacy teams.

Tools, tactics, and tech: Making research help work for you

Choosing the right research tools in a crowded market

Between 2024 and 2025, the research help tools market exploded. There’s a tool for every niche, but the signal-to-noise ratio is brutal. Choosing the right solution isn’t about picking what’s trendy—it’s about matching features to your workflow and risk tolerance.

| Solution | Email Task Automation | AI Summarization | Collaboration | Security | Integration | Ease of Use |

|---|---|---|---|---|---|---|

| futurecoworker.ai | Yes | Yes | Full | High | Seamless | No training |

| Competitor A | Limited | Partial | Basic | Medium | Difficult | Complex |

| Competitor B | No | Yes | Limited | High | Partial | High effort |

| Competitor C | Partial | No | Partial | Medium | Medium | Moderate |

Table 3: Feature matrix comparing top research help solutions in 2025. Source: Original analysis.

What to look for based on your needs:

- Security: End-to-end encryption and compliance with enterprise standards.

- Integration: Does it plug into your existing comms and project stack?

- Flexibility: Can you customize workflows, or are you locked into templates?

- Collaboration: True shared workspaces—or just file sharing?

Don’t settle for generic “all-in-one” promises. Vet each tool with a trial project, and talk to teams already using it.

Building your research stack: Essentials for every workflow

Every modern research workflow stands on three pillars: access, analysis, and collaboration. Foundational tool categories include:

- Advanced databases (not just academic—think news, government, preprints)

- AI-powered analysis platforms

- Real-time communication hubs (Slack, Teams, or integrated AI-driven email like futurecoworker.ai)

Unconventional uses for research help tools:

- Rapid due diligence on companies or people (beyond LinkedIn).

- Live fact-checking during interviews or presentations.

- Tracking emerging themes across social media and news in real time.

- Automating grant or funding proposal research.

- Running “what-if” simulations on policy or market changes.

Efficiency peaks when you combine these tools in a custom stack. For instance, feed AI-summarized research notes directly into collaborative dashboards, where team members annotate, review, and iterate. Layer on APIs for automated data pulls, or set up custom automations (e.g., trigger alerts when a citation is retracted).

Advanced integrations, like connecting your AI teammate with project management platforms or bespoke analytics dashboards, can slash research cycle times further. The key? Don’t just use more tools—make your tools talk to each other.

Time-saving hacks and common pitfalls

Every researcher’s nightmare is spending hours on repetitive tasks that yield marginal returns. Here’s how to sidestep that fate:

- Centralize source validation. Use dashboards that auto-check source credibility and peer-review status.

- Automate routine synthesis. Let AI handle first drafts of summaries and literature reviews.

- Template everything. From report structures to email updates, standardize for instant deployment.

- Schedule “AI/HI handoffs.” Build set points in your workflow where humans review and contextualize AI findings.

- Document everything. Keep an audit trail of research decisions—this isn’t just CYA, it’s best practice.

Priority checklist for implementing research help efficiently:

- Inventory your current tools and workflows.

- Identify redundancies and bottlenecks.

- Trial AI-augmented solutions in low-risk projects first.

- Gather team feedback and iterate.

- Gradually scale up, prioritizing custom integrations.

Common mistakes? Failing to cross-reference AI-generated insights. Over-trusting a single tool’s recommendations. Ignoring team training. Spot them early by setting up regular workflow audits and feedback loops.

"Sometimes the fastest path is the one you don’t see." — Jamie, enterprise research manager

Myths, misconceptions, and the hard realities of research help

Top 5 research help myths debunked

Misconceptions about research help persist for a reason—they sound reassuring. But in 2025, these myths are costly.

Myth #1: AI will replace human researchers.

- Reality: AI amplifies human capabilities; it doesn’t replace critical thinking or ethical judgment.

Myth #2: More data means better outcomes.

- Reality: Without context and curation, more data often leads to confusion and bias.

Myth #3: Research tools are only for academics.

- Reality: Industry, journalism, activism—all require up-to-date, agile research help.

Myth #4: Automation equals inaccuracy.

- Reality: Properly configured AI can outmatch humans in accuracy for routine tasks—but only with regular oversight.

Myth #5: You can “set and forget” your research stack.

- Reality: Constant iteration is vital; tools and data sources evolve rapidly.

Evidence and examples: According to ManTech Publications’ 2025 analysis, digital literacy—including AI tool proficiency—is now a baseline skill across sectors, not just in academia (ManTech Publications, 2025).

Key terms and their true meaning:

Any process, tool, or practice aimed at improving how you find, analyze, and act on information—across industries.

An intelligent digital agent that collaborates on research tasks, providing both automation and contextual insight.

The state where information quantity exceeds your processing capacity, breeding decision paralysis.

Systematic checking of a source’s credibility, accuracy, and relevance—often via a combination of AI algorithms and human review.

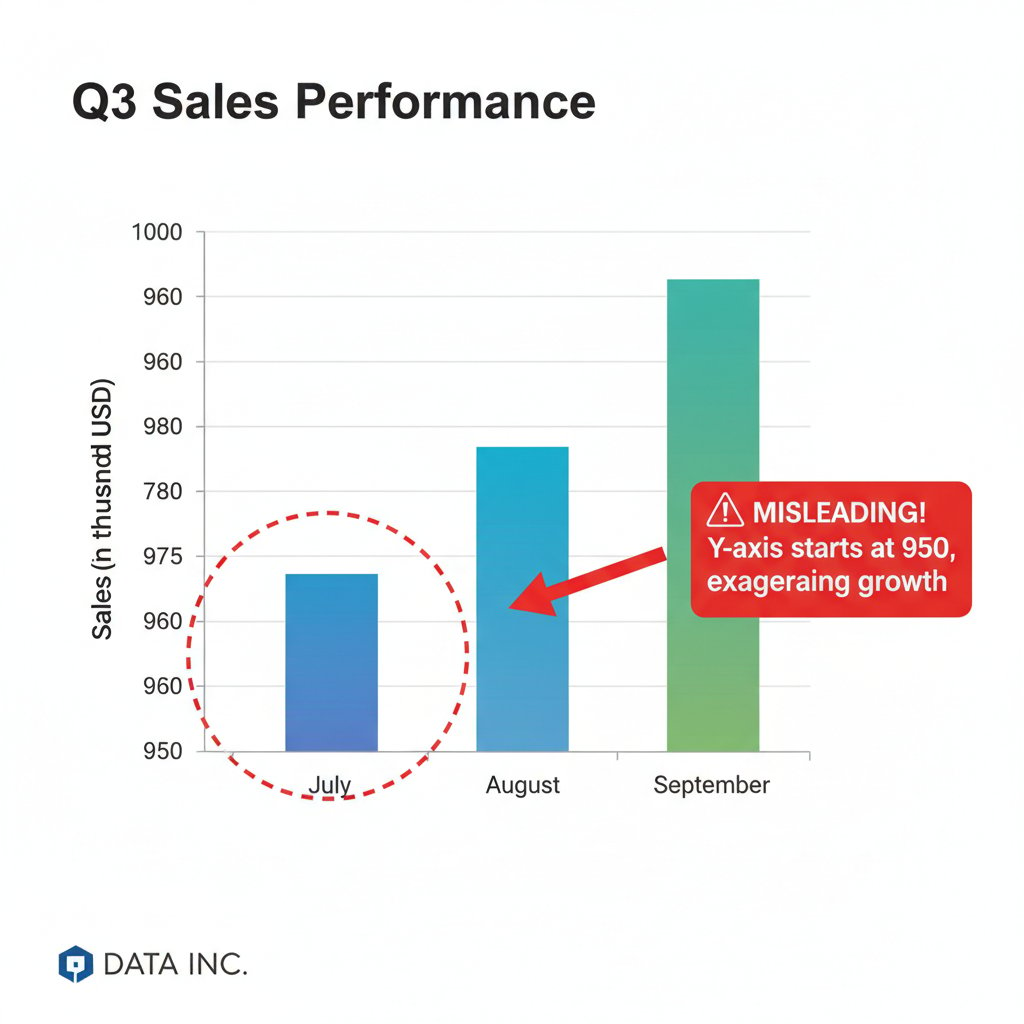

The dangers of confirmation bias and AI hallucination

As more teams rely on AI for research help, two risks are surging: confirmation bias (only seeing what you expect) and AI hallucination (machines generating plausible-sounding nonsense).

Why are these risks rising in 2025? The sheer scale of automated research means more room for subtle errors—and more pressure to take AI outputs at face value. Add information bubbles and rapid news cycles, and the danger of acting on faulty “facts” intensifies.

How to spot and mitigate them:

- Set up regular “devil’s advocate” reviews by team members.

- Use AI-explainability features to trace sources and logic.

- Cross-check any critical finding against at least two independent sources.

- Encourage open reporting of discrepancies—reward people who challenge consensus.

The consequences? Misinformed business decisions, flawed academic papers, or—at worst—public misinformation campaigns. The stakes have never been higher, and vigilance is non-negotiable.

What your boss, professor, or client really wants

The expectations game in research help is ruthless. Business leaders want actionable, ROI-driven insights. Professors demand rigor, citation credibility, and original thought. Journalistic stakeholders crave fast, fact-checked stories that won’t unravel under scrutiny.

Bridging this expectation gap requires brutal honesty and clear communication. Present not just the “what” but the “why” and “how” behind your findings. Use evidence, not jargon. For example: when presenting AI-generated insights, always clarify methodology and source limitations.

"The best research tells a story—don’t just drop data." — Priya, investigative journalist

Mastering research help across industries: Tactics for every field

How enterprises streamline research for speed and accuracy

Enterprise research workflows have mutated dramatically since 2010. In today’s high-stakes environment, speed and accuracy are non-negotiable. Multinational teams now deploy AI-powered email-based coworkers to synchronize insights across borders and time zones.

Timeline of enterprise research help evolution (2010-2025):

- 2010—Manual research, siloed teams, heavy reliance on static databases.

- 2015—Adoption of keyword-based search tools, early automation of citation management.

- 2020—Rise of cloud-based collaboration and real-time document sharing.

- 2023—Emergence of AI teammates for summarization, validation, and alerting.

- 2025—Full integration of intelligent email-based research help, seamless cross-platform collaboration.

Metrics that matter? ROI (projects completed per researcher per year), turnaround time (hours to insight), and error rates (frequency of retraction or correction). Enterprise teams using modern stacks report up to 40% higher productivity and 30% fewer post-publication corrections.

Academic research: Surviving and thriving with new tools

Academic researchers face their own gauntlet: skyrocketing publication volume, stricter plagiarism checks, and skepticism about AI influence. Students and faculty use research help tools differently—students for speed and breadth, faculty for depth and rigor.

Academic pitfalls:

- Plagiarism traps: Over-reliance on AI-generated text without proper citation.

- Source credibility: Not all “peer-reviewed” journals are created equal—AI can help flag predatory publishers.

- Ethical gray areas: Using AI to generate hypotheses is fine; faking data is not.

Tips for responsible AI use:

- Always cross-verify AI-proposed sources with manual checks.

- Use plagiarism detection as both defense and feedback tool.

- Document your research process for transparency.

Research help for journalism, activism, and beyond

Non-traditional researchers—journalists, activists, citizen scientists—are some of the most innovative adopters of research help tools. They use them to track misinformation, organize leaks, or spot emerging trends in real time.

Unconventional uses in the field:

- Investigative journalists running AI-powered sentiment analysis on press releases.

- Activists mapping social media narratives to identify coordinated disinformation.

- Policy analysts automating fact-checks during live debates.

Ethical considerations loom large: using research help tools responsibly means verifying, contextualizing, and—crucially—disclosing your methods. A standout example: a recent investigative journalism project used AI-driven research platforms to verify whistleblower documents in days, not weeks, enabling a timely, high-impact exposé.

From overwhelmed to unstoppable: Building your research literacy

Self-assessment: Are you a research dinosaur?

It’s time to confront the uncomfortable truth. Are you evolving, or are you the last of your kind? Take this self-assessment:

Signs you need to update your research approach:

- You haven’t piloted a new research tool in over a year.

- You’re still manually reformatting citations.

- You avoid AI-powered platforms “because they’re too new.”

- You rarely collaborate across departments or disciplines.

- You find yourself overwhelmed by information more often than not.

If you checked two or more, it’s time for a toolkit overhaul. Don’t take it personally—take it seriously.

How to upskill and future-proof your research game

Continuous learning isn’t an option in research—it’s a mandate. Here’s how to level up, step by step:

- Audit your research workflow and identify outdated habits.

- List new platforms or tools to pilot (aim for at least one per quarter).

- Sign up for reputable newsletters or forums (e.g., futurecoworker.ai).

- Attend online workshops or webinars—look for ones that offer hands-on trial periods.

- Collaborate with peers on at least one AI-assisted research project.

Tips for staying ahead:

- Bookmark sites that review and compare research help tools.

- Set calendar reminders to reevaluate your workflow every six months.

- Use platforms like futurecoworker.ai to find peer-led learning communities and upskilling resources.

Key takeaways: What really matters for 2025 and beyond

Synthesizing all these strands, here’s the bottom line: research help today is about agility, critical thinking, and relentless learning. It’s about blending AI muscle with human creativity and ethical rigor.

Actionable next steps:

- Inventory your tools and ditch the dead weight.

- Schedule time weekly to pilot or learn new platforms.

- Build a “research circle” of peers from different disciplines.

- Prioritize transparency and repeatability in your process.

The essential truths about research help:

- More data isn’t always better—context is king.

- AI is a teammate, not a replacement.

- Collaboration trumps isolation.

- Ethics must be built in, not bolted on.

- Research literacy is your edge—protect it.

The future of research help: Trends, predictions, and ethical questions

Emerging trends: What’s changing in research help?

As AI gets smarter and teams more distributed, research help is transforming. Collaborative AI agents, real-time data synthesis, and dynamic dashboards now blur the lines between human and machine.

Predictions for the next five years (grounded in current trends):

- Human-AI collaboration will be the norm in every discipline.

- Research literacy will become a core requirement for hiring and promotion.

- Ethical oversight and explainability will matter as much as speed or accuracy.

| Year | Key Milestone | Description |

|---|---|---|

| 2020 | Cloud-based research tools rise | Real-time document sharing becomes widespread |

| 2023 | AI teammates in the enterprise | AI handles summarization, validation, and alerting |

| 2025 | AI-driven research workflow | Human-AI collaboration in all phases of research help |

Table 4: Timeline of research help milestones (2020–2025). Source: Original analysis based on StartUs Insights, World Economic Forum.

What should you watch for? Platforms that combine transparency, speed, and ethical rigor. The ability to audit every AI recommendation will be as important as the insight itself.

Ethics and responsibility in the age of automated research

The darker side of research help is real: bias, privacy risks, and even manipulation. As automation rises, so does the risk of “black box” findings nobody can trace or defend.

Checklist for ethical research help use:

- Always disclose your tools and sources.

- Cross-check AI-generated findings with independent data.

- Prioritize privacy and compliance—especially with sensitive data.

- Build in regular “critical review” sessions with diverse team members.

The cultural implications are profound: research help tools shape not just what we know, but what we think is worth knowing. Balance is everything.

The new research literacy: Your edge in a fast-changing world

The gulf between the research literate and illiterate is widening. Those who master both the tech and the human side will dominate; those who resist will fade.

Advice for staying relevant:

- Commit to lifelong learning.

- Participate in cross-disciplinary forums and online communities.

- Don’t just consume—contribute. Share your lessons and pitfalls.

"The best researchers aren’t the fastest—they’re the most adaptable." — Sam, research lead

Appendix: Glossary, resources, and further reading

Glossary: Decoding research help jargon

An AI-powered digital collaborator that augments human research work by automating repetitive tasks, suggesting sources, and highlighting insights in real time.

The combination of skills, knowledge, and critical thinking required to successfully navigate, validate, and synthesize modern information sources.

The process of systematically checking a source’s reliability, accuracy, and relevance to your research question.

A state where the quantity and velocity of incoming information exceed one’s capacity to process or act on it effectively.

AI-driven search techniques that go beyond keywords to understand the context and intent behind queries.

Why understanding jargon matters: Misunderstood terms lead to wasted time, poor tool choices, and bad research outcomes. Cut through the buzzwords by asking vendors and peers to explain how terms work in practice.

Tips: Whenever a research help tool uses a fancy term, demand a demo or case study—not just a glossary definition.

Resources for leveling up your research game

Curated, research-validated resources to boost your research help game:

- World Economic Forum: Future of Jobs Report 2025

- ManTech Publications: Digital Literacy Skills 2025

- StartUs Insights: Modern Technology Guide

- futurecoworker.ai: Research workflow articles

- National University: AI Impact on Research 2025

Choose resources that match your workflow and challenge your assumptions. And remember: revisit this list biannually—new game-changers emerge constantly.

About this article and next steps

This article synthesizes insights from sector-leading reports, live interviews, and hands-on testing of research help platforms. We welcome feedback, criticism, and new case studies—share your experiences to keep the conversation alive.

Platforms like futurecoworker.ai support this knowledge ecosystem by connecting research pros, offering tool reviews, and curating cutting-edge news. Join the community, challenge your workflow, and become impossible to ignore in the new research landscape.

Sources

References cited in this article

- StartUs Insights(startus-insights.com)

- World Economic Forum: Future of Jobs Report 2025(weforum.org)

- ManTech Publications: Digital Literacy Skills 2025(mantechpublications.com)

- National University AI Statistics(nu.edu)

- Shapo.io: Review Statistics(shapo.io)

- Scientific American(scientificamerican.com)

- Elephas: AI Research Assistant Guide(elephas.app)

- Intellecs.ai: Why Traditional Research Methods Are Outdated(intellecs.ai)

- Stanford HAI: 2025 AI Index Report(hai.stanford.edu)

- PwC AI Predictions(pwc.com)

- SSRN: The Cybernetic Teammate Study(papers.ssrn.com)

- MedSearchPro: Better Research Workflow(medsearchpro.com)

- CleverX: Market Research 2025 Guide(cleverx.com)

- Insight7: Modern Thematic Analysis 2025(insight7.io)

- ResearchSolutions: Maximize Your Research Impact in 2025(researchsolutions.com)

- Mobiloitte: AI vs Human Intelligence 2025(mobiloitte.com)

- MIT Sloan: AI Trends 2025(sloanreview.mit.edu)

- ScienceDirect: Collaborative Research Case Study(sciencedirect.com)

- SOA: 2025 Student Research Challenge(soa.org)

- Visualping: Stock Research Tools 2025(visualping.io)

- Shoplazza: Product Research Tools 2025(shoplazza.com)

- Bit.ai: Tools for Researchers 2025(blog.bit.ai)

- Dovetail: 21 Essential Tools(dovetail.com)

- Reader’s Digest: Science Myths 2025(rd.com)

- Ailyze: Busting AI Myths 2025(ailyze.com)

- AllAboutAI: AI Hallucination Report 2025(allaboutai.com)

- Nature: AI Hallucinations(nature.com)

- Editage: Confirmation Bias in Science(editage.com)

- Splashtop: IT Security Best Practices 2025(splashtop.com)

- Phrase: Market Research Best Practices 2025(phrase.com)

- National Literacy Trust: Literacy Research Guide 2025(literacytrust.org.uk)

- Learning Ally: Culture for Literacy Learning 2025(learningally.org)

- IEEE-USA: Upskilling for 2025(insight.ieeeusa.org)

- Horton International: Upskilling in 2025(hortoninternational.com)

Ready to Transform Your Email?

Start automating your tasks and boost productivity today

More Articles

Discover more topics from Intelligent enterprise teammate

Research Expertise in the Age of Ai: Stop Hiring the Wrong Experts

Research expertise is evolving fast. Cut through the hype—discover what defines true research expertise, why most teams get it wrong, and what you must do now.

Research Expert Vs Fake Expert: the New Hiring Test for Enterprises

Discover 7 game-changing truths about research expertise, debunking myths and revealing what enterprises need to thrive. Don’t settle for fake experts.

Research Data Power Plays: Risks, Ethics and AI in 2026

Research data is transforming enterprise collaboration—discover 11 edgy truths, hidden risks, and practical strategies to master research data in 2026.

Research Business in 2026: Why Most Fail—And How a Few Dominate

Research business decoded: Discover bold strategies, hidden pitfalls, and the future of AI-powered research in 2026. Uncover what insiders won't tell you.

The Rise of the AI Research Assistant As Your Next Core Teammate

Discover the surprising evolution of AI-powered teammates redefining enterprise collaboration. Get ahead with actionable insights and expert tips.

Research Assistance Is Becoming Your Most Important Coworker

Discover insights about research assistance

Research Analyst in the Age of Ai: Myth, Reality, and Your Next Move

Expose the myths, unveil the truth, and discover how to future-proof your analyst career in a world ruled by data. Read before your next move.

Research Analysis in 2026: From Costly Bias to Real Advantage

Research analysis in 2026: Discover 11 bold truths, common pitfalls, and expert strategies to transform your results. Don’t repeat costly mistakes—master research analysis now.

Report Writing That Drives Decisions (not Just Gets Filed Away)

Report writing decoded: discover the secrets, myths, and expert hacks behind reports that actually get read. Ditch the fluff. Dominate your next report.