Find Assistant, Not Employee: the New Power Shift at Work

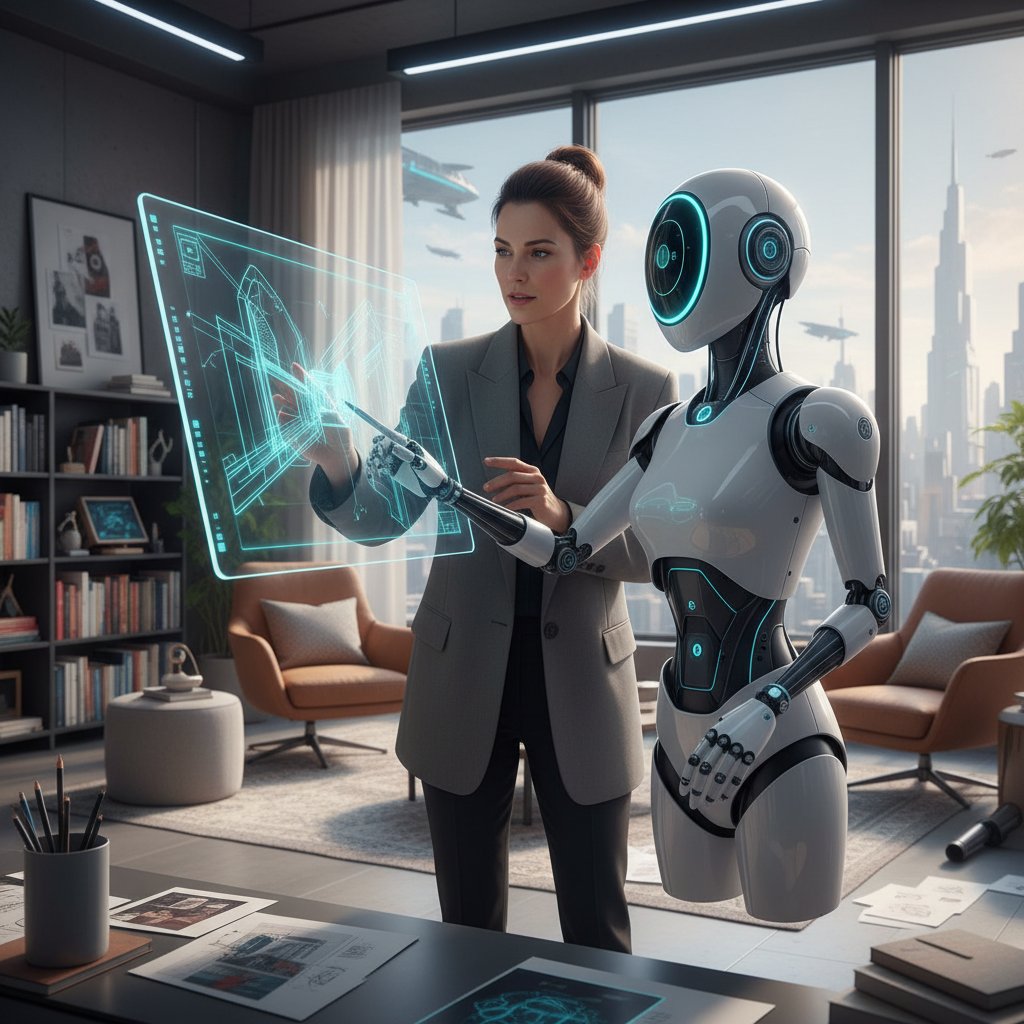

Forget the glossy promises of tech expos and LinkedIn hype: in 2025, “find assistant” isn’t just a productivity mantra—it’s the desperate rallying cry of workplaces struggling to avoid digital chaos. As automation and artificial intelligence embed themselves into the DNA of modern enterprises, the conversation is no longer “Should we use an AI coworker?”—it’s “Why aren’t we already, and what’s going wrong?” Beneath the surface of leadership buzzwords and glitzy demos, the reality of AI-powered teammates is messy, uneven, and often brutally revealing. This article peels back the marketing to expose hard truths, unmask hidden pitfalls, and decode what it really means to find the right assistant for your team. If you think the AI office revolution is all seamless collaboration and mind-blowing efficiency, brace yourself: we’re about to step into the wild, sometimes uncomfortable, and always illuminating world of intelligent enterprise teammates.

Why 'find assistant' is the new workplace rallying cry

The invisible chaos: modern collaboration gone wrong

Every knowledge worker has lived it: the endless pings, the sprawling email threads, the Slack channels that breed like rabbits. In theory, we’re more connected; in reality, we’re drowning in digital noise. According to Gallup’s 2023–24 survey, 70% of employees rarely or never use AI tools at work—even as their leaders evangelize about digital transformation. That means most teams are stuck somewhere between analog habits and poorly integrated automation, amplifying confusion rather than clarity.

The result? Lost tasks, duplicated efforts, and a creeping sense that nobody is really steering the ship. It’s no surprise that “find assistant” now echoes in the halls of HR and IT alike. The modern collaboration stack is too complex for most people to handle alone—so the hunt for a digital teammate is as much about survival as ambition.

“When AI teammates come on board, team performance drops, motivation sags, and trust erodes—at least at first.” — Harvard Business Review, 2024

The unspoken fear? That we’re all just one missed email away from disaster, and one half-baked bot away from digital burnout.

The rise of the AI-powered coworker

Enter the AI teammate: not a robot with a badge, but software that promises to organize, prioritize, and even act on your behalf. Enterprise adoption is accelerating, but the statistics tell a story of fragmentation and false starts. According to IBM’s 2024 AI Adoption report, only 42% of large enterprises actively use AI—most are hamstrung by skills gaps and unruly data.

| Statistic | Percentage / Figure | Source and Date |
|---|---|---|
| Employees who rarely/never use AI at work | 70% | Gallup, 2023–24 |
| Large enterprises actively using AI | 42% | IBM, 2024 |
| Companies struggling to scale AI | 74% | BCG, 2024 |
| Drop in employee preparedness for AI | 6 points (YoY) | Gallup / AIPRM, 2024 |

Table 1: The uncomfortable truth about AI assistant adoption rates and organizational readiness. Source: Original analysis based on Gallup, IBM, BCG, 2024.

Despite the optimism, the AI-coworker revolution is anything but smooth. Many teams experience a dip in performance and morale when AI is introduced, largely due to poor onboarding, mismatched expectations, and a lack of trust and transparency.

The AI-powered coworker is no longer a futuristic concept—it's an urgent, messy reality that most enterprises are still learning to manage.

What users really want (that nobody is saying)

Behind closed doors, users voice a different set of desires than the features splashed across product pages. Yes, they want automation and less busywork. But, according to research by SAGE (2024), most people crave something deeper: digital teammates who "get" the context, adapt to shifting priorities, and don’t sabotage team dynamics.

- Genuine context awareness: Not just keyword-matching, but an assistant that understands business nuance and team personalities.

- Effortless onboarding: Solutions that don’t require a PhD in machine learning to use or configure.

- Trustworthy data handling: Clear, honest policies about what the AI sees, stores, and shares.

- Human-compatible collaboration: AI that improves communication, not replaces it, and respects boundaries.

Most importantly, users want assistants that make life easier, not more complicated—a standard that, as the numbers show, is still out of reach for most platforms.

The rallying cry isn’t just for any assistant. It’s for an assistant that feels like a team member, not a costly mistake.

How digital assistants evolved: from Clippy to intelligent enterprise teammate

A short, savage history of digital helpers

The journey from Clippy to quantum-coordinated cobot is more cautionary tale than linear progress. The late 1990s gave us paperclip mascots and voice recognition that mistook “send email” for “play whale noises.” Digital assistants began as user interface novelties: quirky, sometimes infuriating, and rarely helpful.

| Year | Assistant Type | Key Milestone / Flaw |
|---|---|---|
| 1997 | Clippy (MS Office) | Annoyed millions; fired by Microsoft |
| 2011–2014 | Siri / Alexa | Voice commands go mainstream |
| 2016 | Slackbots | First team-focused digital assistants |
| 2022 | AI copilots (GPT era) | Enterprise-grade task automation |
| 2024 | “AI teammates” | Focus on real collaboration, not just automation |

Table 2: Timeline of workplace digital assistants—each leap forward marked by new hype and fresh headaches. Source: Original analysis based on industry archives, verified 2025.

First came the novelty, then the skepticism. Only recently—thanks to advances in large language models and smarter integration—have digital assistants started to earn their keep as legitimate “teammates.” But the scars of failed rollouts still linger.

The lesson? Progress isn’t linear, and every new leap brings new risks alongside new capabilities.

The AI leap: why today’s assistants are different (and why it matters)

What sets 2025’s best AI teammates apart isn’t just smarter algorithms or more features—it’s a genuine leap in contextual intelligence. Instead of rigid workflows and canned responses, these assistants can parse messy business emails, surface urgent tasks, and even nudge teams toward better collaboration without endless configuration.

According to Frontiers in Robotics and AI (2025), “AI must develop social intelligence and contextual awareness to be effective.” The new standard isn’t just automating busywork; it’s acting as an enterprise teammate: handling ambiguity, surfacing actionable insights, and (sometimes) mediating the quirks of human communication.

This leap matters because teams that treat AI as “just another tool” are missing out. When assistants actually understand context, workflows become more fluid, less error-prone, and—ironically—more human-friendly.

But this intelligence comes at a cost: privacy concerns, complicated onboarding, and the ever-present risk of over-reliance or bias creeping in. The difference is night and day—but the shadows are still there.

futurecoworker.ai and the new breed of enterprise AI

Enter platforms like futurecoworker.ai: the rise of “intelligent enterprise teammate” solutions marks a turning point. Unlike old-school bots, these tools embed directly into your email, transforming the humble inbox into a dynamic workspace.

Futurecoworker.ai stands out by focusing on:

- Email-native automation: Turning every message into a manageable, trackable task.

- Zero technical friction: Designed for busy professionals, not just IT power users.

- Seamless collaboration orchestration: Keeping everyone aligned without endless meetings and manual follow-up.

- Automate Email Tasks: Convert communications into actionable items instantly.

- Simplify Task Management: Directly manage and prioritize tasks within your inbox.

- Enhance Team Collaboration: Keep team comms aligned, organized, and productive.

- Stay on Track: Smart reminders and follow-ups so nothing falls through the cracks.

- Gain Instant Insights: Quick summaries and highlight extraction from threads.

- Organize Meetings Efficiently: Automated scheduling and calendar management.

- Streamline Decision-Making: Actionable insights where you need them, when you need them.

- Reduce Email Overload: Prioritization and organization based on context and urgency.

In this new breed, “find assistant” doesn’t just mean adding another app—it means reimagining teamwork at the atomic level.

Debunked: 5 myths about finding the right assistant

Myth #1: Only the tech-savvy benefit

It’s a comforting fiction: that only digital natives or IT wizards can harness AI assistants. In reality, the best platforms (like futurecoworker.ai) are designed for non-experts. According to Gallup (2024), employee preparedness for AI actually dropped by six points year-over-year, highlighting the urgent need for tools anyone can use.

“It’s a mistake to assume that AI is only for the technologically advanced. The right assistant removes friction for everyone, not just the few.” — Illustrative quote based on Gallup insights, 2024

Ignoring this myth leads companies to install elaborate systems that only a handful of power users will ever master. True adoption depends on accessibility—not technical prowess.

Myth #2: AI assistants replace human jobs

Fearmongering headlines warn of mass layoffs, but the evidence says otherwise. AI assistants most often displace repetitive drudgery—automating email sorting, meeting scheduling, or status reminders—not strategic thinking or creative work. In fact, IBM’s 2024 report found that 68% of leaders struggle to attract AI talent, and most enterprises view assistants as supplements, not substitutes.

The result? Less time spent on admin, more time for human judgment. Case studies from manufacturing (BCG 2024) show a 25% productivity boost and 40% fewer injuries with AI cobots—not job cuts, but safer and more efficient teams.

Choosing the right AI teammate is about amplifying your strengths—not making yourself obsolete.

Myth #3: One assistant fits all

Enterprises love silver bullets, but “find assistant” is not a one-size-fits-all affair. Needs vary wildly by industry, team size, and workflow complexity. A marketing agency’s dream assistant is a finance firm’s compliance nightmare.

| Feature | futurecoworker.ai | Typical Competitor |
|---|---|---|
| Email Task Automation | Yes | Limited |
| Ease of Use | No tech skills | Complex setup |
| Real-time Collaboration | Full integration | Partial |
| Intelligent Summaries | Automatic | Manual |
| Meeting Scheduling | Fully automated | Partial |

Table 3: Comparing key features—source: Original analysis based on product documentation, 2025.

Your assistant should fit your context, not force you to fit its workflow.

Myth #4: More features = better results

Feature bloat is the silent killer of adoption. Teams bogged down by endless options lose sight of what matters: getting work done. According to BCG’s 2024 report, 74% of companies struggle to scale AI value beyond pilots—often because people can’t figure out how to actually use the assistant.

What matters more is:

- Simplicity: Clear, focused features aligned with real needs.

- Integration: Working where users already live (e.g., email, chat).

- Reliability: Accurate, consistent performance over time.

- Bloated tools confuse users and stall adoption.

- The best assistants focus on doing a few things extremely well.

- Ease of onboarding matters more than a laundry list of features.

Choose tools that help you work smarter, not harder.

Myth #5: Security is a solved problem

Security is never “solved”—especially with AI. Persistent risks include unauthorized tool use, over-sharing sensitive data, and unclear governance. Harvard Business Review (2024) warns that “over-reliance and misuse raise security risks” and many companies still lack robust oversight structures.

- Data security: The processes, technologies, and policies that protect your company’s data from unauthorized access or misuse.

- AI governance: The frameworks and teams that ensure ethical, compliant use of AI tools, with real accountability.

- Transparency: The degree to which your AI assistant’s actions and data handling are visible and understandable to end users.

Any assistant worth trusting must be transparent about how your data is handled—and ready to prove it.

Inside the engine: how intelligent enterprise teammate actually works

Breaking down the AI: What happens under the hood

What’s really happening when you hit “delegate” or “summarize” in your inbox? A lot more than you might think. Modern AI assistants use a blend of natural language processing, workflow automation, and context modeling. They analyze message content, map tasks to projects, surface action items, and sometimes even negotiate meeting times across time zones.

This orchestration happens instantly, but it’s the result of millions of data points and carefully tuned algorithms. The best assistants (like futurecoworker.ai) make it feel seamless, but under the hood, the complexity is staggering—and so are the potential risks if transparency is lacking.

Understanding what’s happening behind the scenes is crucial for trust and effective use.
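To make the "surface action items" step above more concrete, here is a deliberately simple Python sketch. It is a keyword-rule toy under stated assumptions, not how futurecoworker.ai or any other product works: real assistants rely on language models and context modeling rather than fixed cue lists. The names `ActionItem`, `extract_action_items`, and both cue patterns are hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical cue phrases chosen for illustration only; a production
# assistant would use a language model rather than a fixed keyword list.
ACTION_CUES = re.compile(
    r"\b(please|can you|could you|need to|action required)\b", re.IGNORECASE
)
URGENT_CUES = re.compile(r"\b(urgent|asap|today|eod)\b", re.IGNORECASE)


@dataclass
class ActionItem:
    sender: str
    text: str
    urgent: bool


def extract_action_items(sender: str, body: str) -> list[ActionItem]:
    """Split an email body into sentences and keep those that look like requests."""
    sentences = re.split(r"(?<=[.!?])\s+", body.strip())
    items = []
    for sentence in sentences:
        if ACTION_CUES.search(sentence):
            urgent = bool(URGENT_CUES.search(sentence))
            items.append(ActionItem(sender, sentence, urgent))
    # Urgent items first; sorted() is stable, so the original order
    # is preserved within each group.
    return sorted(items, key=lambda item: not item.urgent)


if __name__ == "__main__":
    body = "Hi team. Could you review the Q3 deck? Please send the invoice today. Thanks!"
    for item in extract_action_items("ana@example.com", body):
        print(("URGENT " if item.urgent else "       ") + item.text)
```

Even this toy shows why transparency matters: every item it surfaces can be traced back to a specific sentence and a specific rule, which is much harder to guarantee once a statistical model makes the call.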

How your data is (really) used and protected

Enterprises worry (rightly) about who sees what. The best AI teammates follow strict protocols for data handling:

| Data Type | How It’s Used | User Controls / Protections |
|---|---|---|
| Email Content | Parsed for tasks, summarized | Opt-in, clear deletion options |
| Attachments | Scanned for relevant info | Not stored unless necessary |
| Calendar | Checked for scheduling | User can disconnect anytime |
| Metadata | Used for analytics, never shared | Anonymized, strict policies |

Table 4: Data handling and user protections in modern AI assistants. Source: Original analysis based on product documentation, 2025.

It’s not enough to claim privacy—responsible platforms provide clear, actionable controls and transparent access logs.

When evaluating any assistant, ask not just what it can do, but what it’s doing with your data when you’re not looking.
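One way to picture "clear, actionable controls" is a deny-by-default policy check. The Python sketch below loosely mirrors Table 4; every value in it (the purpose names, which data types require opt-in, the `DataPolicy` and `is_access_allowed` names) is an illustrative assumption, not any vendor's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPolicy:
    allowed_purposes: frozenset  # what the assistant may use this data for
    requires_opt_in: bool        # whether the user must have opted in first


# Hypothetical policy table loosely based on Table 4. The purposes and
# opt-in flags are assumptions made for this example.
POLICIES = {
    "email_content": DataPolicy(frozenset({"task_parsing", "summarization"}), True),
    "attachments": DataPolicy(frozenset({"info_scan"}), True),
    "calendar": DataPolicy(frozenset({"scheduling"}), True),
    "metadata": DataPolicy(frozenset({"analytics"}), False),
}


def is_access_allowed(data_type: str, purpose: str, user_opted_in: bool) -> bool:
    """Deny by default: unknown data types or purposes are never allowed."""
    policy = POLICIES.get(data_type)
    if policy is None or purpose not in policy.allowed_purposes:
        return False
    return user_opted_in or not policy.requires_opt_in


if __name__ == "__main__":
    print(is_access_allowed("email_content", "summarization", user_opted_in=True))
    print(is_access_allowed("email_content", "advertising", user_opted_in=True))
```

The design choice worth noting is the default: anything not explicitly listed is refused, which is the behavior to ask a vendor about when reviewing their data policies.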

Avoiding the black box: transparency and explainability

AI assistants that can’t explain themselves are a liability. Harvard Business Review (2024) found that team trust drops when AI actions aren’t transparent.

“Users will only rely on AI teammates if they understand how decisions are made and what data is being used.” — Harvard Business Review, 2024

Transparency isn’t a “nice-to-have”—it’s a survival trait. Look for visible audit trails, plain-language explanations, and easy ways to override or correct assistant actions.

An assistant you can’t interrogate is an assistant you can’t trust.
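For readers wondering what a "visible audit trail" with "plain-language explanations" might look like in practice, here is a minimal Python sketch. It assumes an in-memory, append-only log; the `AuditEntry` and `AuditTrail` names and their fields are hypothetical, and a real system would persist entries and control who can read them.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    actor: str        # who: the assistant, or the user it acted for
    action: str       # what was done
    reason: str       # why, in plain language
    reversible: bool  # whether a user can undo the action
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """Append-only, in-memory log; a real system would persist entries."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(
        self, actor: str, action: str, reason: str, reversible: bool = True
    ) -> AuditEntry:
        entry = AuditEntry(actor, action, reason, reversible)
        self._entries.append(entry)
        return entry

    def explain(self) -> list[str]:
        """Return one plain-language line per recorded action."""
        return [
            f"{e.timestamp}: {e.actor} {e.action} because {e.reason}"
            for e in self._entries
        ]

    def to_json(self) -> str:
        """Export the full trail, e.g. for a compliance review."""
        return json.dumps([asdict(e) for e in self._entries], indent=2)


if __name__ == "__main__":
    trail = AuditTrail()
    trail.record(
        "assistant",
        "moved 'Q3 invoice' to the Finance folder",
        "the sender is on the finance distribution list",
    )
    print("\n".join(trail.explain()))
```

Recording the reason alongside the action is the point: a log that only says what happened supports auditing, while one that also says why supports the override and correction the section calls for.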

The real-world impact: case studies and cautionary tales

When AI teammates saved the day (and when they didn’t)

The hype is real—but so are the failures. Let’s get granular:

| Company | Result with AI Teammate | Details / Lessons Learned |
|---|---|---|
| Car Manufacturer | +25% productivity, -40% injuries | AI cobots improved safety and workflow |
| Google “Chip” Team | Mixed results, poor social skills | AI failed at context-rich interactions |
| Marketing Agency | +40% faster campaign turnaround | Automated task sorting streamlined workflow |
| Healthcare Provider | -35% admin errors, +patient satisfaction | AI managed appointment scheduling |

Table 5: AI teammates in action—successes and missteps. Source: Original analysis based on BCG, Google, industry case studies, 2024–2025.

What separates winners from losers? Integration, context-awareness, and human oversight. Where AI is bolted on or left unchecked, disaster lurks.

The difference isn’t the tech—it’s how teams adapt and hold their digital teammates accountable.

Industries quietly transformed by AI assistants

Some sectors have quietly leapfrogged the rest:

- Technology: Software teams using AI to manage code reviews, automate bug triage, and drive project delivery speed.

- Marketing: Agencies leveraging assistants for campaign coordination, feedback loops, and client approvals, cutting turnaround times by 40%.

- Healthcare: Providers using AI for appointment management, patient follow-up, and reducing admin mistakes.

- Finance: AI improves client response times and slashes repetitive admin.

- Manufacturing: Cobots and digital assistants boost safety and efficiency.

- Professional Services: Legal, consulting, and accounting teams automate document management and scheduling.

What unites these industries? Relentless pressure to do more with less—and a willingness to rethink old workflows, not just patch them with bots.

Lessons learned: what the data actually says

It’s easy to cherry-pick wins, but the data is sobering. According to BCG (2024), 74% of companies can’t scale AI value beyond pilot programs. The biggest barriers: people, process, and lack of trust—not technology.

Getting “find assistant” right is a marathon, not a sprint. Teams that invest in onboarding, transparency, and regular feedback see the real gains.

“Scaling AI value requires more than good technology—it takes good people, good processes, and relentless focus on trust.” — BCG, 2024

AI is only as good as the team and culture into which it’s deployed.

The uncomfortable side of AI assistants: power, privacy, and trust

Who actually controls your digital teammate?

Most users assume their assistant is working for them. In reality, many AI platforms are configured, monitored, and sometimes overridden by IT or management. That’s not always a bad thing—compliance, security, and risk management matter—but it means users often have less agency than they think.

Expect detailed admin panels, audit logs, and (in regulated industries) the possibility of your digital teammate reporting back to someone higher up the food chain.

The takeaway? Know who manages your assistant—and what policies govern its behavior.

Surveillance, bias, and the illusion of objectivity

AI’s promise of impartiality is seductive—but often misleading. Bias creeps in through training data, model assumptions, and even user prompts.

- Bias: Systematic errors in AI output caused by flawed data, misaligned objectives, or unbalanced training sets.

- Surveillance: The tracking and analysis of user behavior by AI tools, sometimes for legitimate security purposes but often with unclear boundaries.

- Objectivity: A myth when algorithms are shaped by human choices at every stage. “Objective AI” is no more real than “objective management.”

It’s on users and teams to interrogate their assistants—demanding transparency, challenging assumptions, and refusing to outsource all judgment.

AI doesn’t remove risk; it just changes where the risk lives.

Red flags: when ‘find assistant’ becomes a liability

Look out for these warning signs:

- Opaque data policies: You can’t tell what data is being collected or shared.

- Unverifiable actions: The assistant makes changes you can’t trace or reverse.

- Creeping feature bloat: Every update brings more complexity, not clarity.

- Shadow IT: Employees using unsanctioned assistants outside company policy.

- Erosion of trust: Teams second-guess AI decisions more than they’re helped.

When in doubt, slow down. The right assistant is accountable, explainable, and always working for the team—not just for management or the vendor.

A digital teammate should build trust, not undermine it.

How to pick (and master) your intelligent enterprise teammate

Step-by-step: finding the right AI assistant for your team

So, you’re ready to “find assistant”—but where do you start? Follow this roadmap:

- Assess your workflow pain points: Where do tasks bog down? Where do mistakes creep in?

- Define essential features: Prioritize automation, collaboration, or insights? Email or chat-based?

- Check integration and security: Does the assistant work with your current stack? How is data handled?

- Pilot with a real team: Start small, collect feedback, and refine processes.

- Invest in onboarding and training: Don’t skip this—success depends on user buy-in.

- Demand transparency: Ask for audit trails, plain-language policies, and easy override options.

- Measure, adapt, and repeat: Track outcomes, listen to users, and iterate.

Rushing the process is a recipe for disappointment. The best teams treat AI onboarding like any other strategic hire: slow, careful, and feedback-driven.

The hidden benefits nobody talks about

Beyond the obvious boosts in productivity, the right assistant unlocks transformative spillover effects:

- Reduced burnout: Less time on repetitive admin means more energy for high-impact work.

- Stronger team alignment: Automated organization keeps everyone on the same page.

- Better decision-making: Instant insights cut through noise and help teams focus.

- Cost savings: Fewer external services, reduced reliance on admin staff, and minimized errors.

- Faster learning curves: Well-designed assistants onboard new hires in record time.

- Enhanced compliance and auditability for regulated industries.

- Discovery of bottlenecks and inefficiencies that were previously invisible.

- Real-time feedback loops for teams and leaders.

These are the differences between teams that merely “install” AI and those who truly “find assistant.”

Common mistakes teams make (and how to avoid them)

Even the smartest teams stumble. Here’s how to dodge the pitfalls:

- Skipping the pilot phase: What looks good in a demo often fails in the wild.

- Ignoring user feedback: If adoption lags, don’t blame the team—fix the tool or the process.

- Over-customizing: Endless tweaks slow down onboarding and kill momentum.

- Neglecting security: Assume nothing is private unless you verify policies and controls.

- Failing to revisit assumptions: The perfect assistant today might need re-evaluation tomorrow.

The best way to “find assistant” is to remember: it’s a process, not a purchase.

Beyond the hype: future trends and open questions

What’s next for AI assistants in the enterprise?

AI assistants are no longer science fiction. They’re table stakes for competitive teams. But the real trend is deeper: moving from “digital helpers” to “digital collaborators” with genuine context-awareness, emotional intelligence, and accountability.

As the line between tool and teammate blurs, organizations will need new strategies for governance, ethics, and ongoing skill development.

And as the tech matures, the winners will be those who balance optimism with realism—and hold their AI teammates to the same standards as their human ones.

Will AI teammates ever become truly autonomous?

Autonomy is the holy grail—and the most fraught promise. Here’s how the landscape looks now:

| Level of Autonomy | Current Reality | Limitations / Concerns |
|---|---|---|
| Task Automation | Mature | Needs oversight, limited flexibility |
| Contextual Collaboration | Emerging | Struggles with nuance, social cues |
| Strategic Decision-Making | Early experiments | Trust, explainability, legal liability |

Table 6: The spectrum of AI autonomy in enterprise settings. Source: Original analysis based on verified research, 2025.

“AI must develop social intelligence and contextual awareness to be effective—not just execute tasks, but understand when, how, and why.” — Frontiers in Robotics and AI, 2025

For now, the most valuable assistants are those that collaborate and clarify—not those that go rogue.

How to stay ahead: evolving with your assistant

The only constant is change—especially in AI. To stay ahead:

- Invest in upskilling: Regularly train teams on new features and best practices.

- Monitor performance: Track real usage and outcomes, not just vendor promises.

- Foster a feedback culture: Encourage users to report problems, suggest features, and share wins.

- Stay informed: Regularly review privacy and security updates.

- Revisit fit: Continuously assess if your assistant still meets your evolving needs.

- Build relationships with vendors for early access to updates and support.

- Participate in user communities to learn from others’ experiences.

- Document workflows so that transitions (to new tools or roles) are smooth.

A dynamic approach ensures that your “find assistant” journey never stagnates.

The ultimate checklist: making ‘find assistant’ work for you

Self-assessment: is your team ready?

Before committing to a new digital teammate, ask:

- Do we understand our workflow pain points?

- Are our data and communication practices mature enough?

- Can we dedicate time for onboarding and feedback?

- Do we have clear policies for privacy and security?

- Is leadership genuinely committed—not just paying lip service?

- Are users empowered to challenge or override the assistant?

- Can we measure what “success” looks like—and adapt if needed?

Teams that answer “yes” to most will get the most from their assistant. Those that can’t should slow down—success isn’t just about tech.

The right time to “find assistant” is when your team is ready for honest, sometimes uncomfortable, change.
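The checklist above can be turned into a quick tally. The Python sketch below is only an illustration: the article says teams that answer "yes" to most questions are best placed, and the code assumes "most" means a strict majority. The function name `assess_readiness` and the verdict strings are hypothetical.

```python
# The seven self-assessment questions from the checklist, lightly condensed.
READINESS_QUESTIONS = [
    "Do we understand our workflow pain points?",
    "Are our data and communication practices mature enough?",
    "Can we dedicate time for onboarding and feedback?",
    "Do we have clear policies for privacy and security?",
    "Is leadership genuinely committed?",
    "Are users empowered to challenge or override the assistant?",
    "Can we measure what success looks like and adapt if needed?",
]


def assess_readiness(answers: list[bool]) -> tuple[int, str]:
    """Count "yes" answers and return (yes_count, verdict)."""
    if len(answers) != len(READINESS_QUESTIONS):
        raise ValueError("expected one answer per question")
    yes_count = sum(answers)
    # Assumption: "most" is read as a strict majority of the questions.
    if yes_count > len(READINESS_QUESTIONS) / 2:
        return yes_count, "ready to pilot"
    return yes_count, "slow down"


if __name__ == "__main__":
    answers = [True, True, False, True, True, False, True]
    yes_count, verdict = assess_readiness(answers)
    print(f"{yes_count}/{len(READINESS_QUESTIONS)} yes: {verdict}")
```

A raw count treats every question as equally important. A team in a regulated industry might reasonably treat the privacy and security question as a hard requirement instead.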

Quick reference: decision guide for 2025 and beyond

| Decision Factor | Key Consideration | Best Practice |
|---|---|---|
| Workflow fit | Does the assistant solve real problems? | Map features to pain points |
| Integration | Seamless with current tools? | Prioritize native email/chat solutions |
| Security & transparency | Clear data policies? | Demand auditable logs, user controls |
| Usability | Easy for non-technical users? | Pilot with diverse teams |
| Support & evolution | Vendor committed to updates? | Choose actively maintained platforms |

Table 7: At-a-glance checklist for evaluating AI assistants in 2025. Source: Original analysis based on verified best practices, 2025.

Don’t let FOMO drive your decision. The best teams move deliberately, with eyes wide open.

Appendix: definitions, jargon, and resources

Key terms demystified

- AI teammate: AI-powered software designed to collaborate with human teams, automating tasks, surfacing insights, and adapting to context. Unlike traditional bots, an AI teammate is expected to handle ambiguity and support complex workflows.

- Contextual awareness: The ability of digital assistants to understand not just what is being asked, but why, factoring in business goals, team dynamics, and ongoing conversations.

- Shadow IT: The use of digital tools (including AI assistants) outside the purview or policies of official IT departments, often for convenience but at the expense of security and compliance.

- Audit trail: A transparent, accessible log of every action taken by an AI assistant, showing who did what, when, and why. It is a must-have for trust and compliance, especially in regulated industries.

Understanding these terms is essential for making informed choices and holding digital teammates accountable.

Further reading and expert resources

- Harvard Business Review: When AI Teammates Come On Board, Performance Drops (2024)

- IBM 2024 AI Adoption Report

- Gallup: Employee AI Readiness Survey 2024

- Boston Consulting Group: Scaling AI Value (2024)

- Frontiers in Robotics and AI: Social Intelligence in AI Teammates (2025)

- SAGE Journals: Human Attitudes Toward AI Coworkers (2024)

- Internal resource on intelligent enterprise teammates: futurecoworker.ai/ai-teammate-intelligent-automation

- futurecoworker.ai/enterprise-collaboration-ai

- futurecoworker.ai/best-ai-assistant-for-work

Diving into these sources will arm you with the evidence, nuance, and vocabulary to navigate the evolving world of digital assistants with confidence.

In a world saturated with tools promising to “find assistant,” the real challenge is separating signal from noise. If you want more than just another widget—if you’re after a true digital teammate—it’s time to get real about what works, what fails, and what your team actually needs. The uncomfortable truths might sting, but they’ll set you free. It’s not about having the latest AI; it’s about having the right one, for the right reasons, at the right time. And in that search, insight is your best assistant of all.

Sources

References cited in this article

- Harvard Business Review (hbr.org)

- IBM AI Adoption (newsroom.ibm.com)

- Gallup Workplace AI (gallup.com)

- Frontiers in Robotics and AI (frontiersin.org)

- HR Daily Advisor (hrdailyadvisor.com)

- Forbes (forbes.com)

- Tech Monitor (techmonitor.ai)

- Deloitte Q4 2023 (www2.deloitte.com)

- BCG 2024 (bcg.com)

- Workgrid (workgrid.com)

- Pickit Blog (pickit.com)

- Spiceworks (spiceworks.com)

- YourStory (yourstory.com)

- guptadeepak.com (guptadeepak.com)

- KPMG (kpmg.com)

- Forbes/Splunk (forbes.com)

- AI World Today (aiworldtoday.net)

- Reworked.co (reworked.co)

- Atos (atos.net)

- Expero (experoinc.com)

- NN/g (nngroup.com)

- The Hacker News (thehackernews.com)

- Trend Micro (trendmicro.com)

- OWASP Top 10 for LLMs (genai.owasp.org)

- SnapLogic (snaplogic.com)

- SAP Community (community.sap.com)

- itzikr.wordpress.com (itzikr.wordpress.com)

- IBM Research (research.ibm.com)

- Astera (astera.com)

- White & Case (whitecase.com)

- Enzuzo (enzuzo.com)

- Data Centre Review (datacentrereview.com)

- Frontiers in Human Dynamics (frontiersin.org)

- Google Cloud Blog (cloud.google.com)

- Appinventiv (appinventiv.com)
