File Processing in 2026: From Silent Risk to Strategic Advantage

File processing is the unglamorous machinery behind your organization’s daily grind. You rarely notice it until it fails, and then the ripple effect shreds your workflows, exposes you to compliance nightmares, or triggers a high-stakes hunt for the one missing file that could save (or sink) a deal. In the modern enterprise, file processing sits at the crossroads of productivity, security, and digital transformation. Ignore its realities, and you’re not just slowing down; you’re actively courting disaster. This article rips the lid off the all-too-common myths, exposes the high cost of systemic neglect, and delivers actionable insights you won’t find in fluffy “future of work” blog posts. Welcome to the unfiltered truth: in 2026, file processing is either your silent advantage or your organization’s Achilles’ heel.

The invisible backbone: why file processing matters more than you think

File chaos: the hidden cost of getting it wrong

In most organizations, file processing failures aren’t headline-grabbing events. They’re slow burns—a lost contract here, a compliance miss there, an employee burning an hour hunting for a misnamed attachment. And yet, these microfailures ripple through entire enterprises, compounding into monumental losses. According to research from Inc.com, 2024, companies lose an average of 21.3% of their productivity due to fragmented file workflows and document errors. The financial impact? Mid-sized firms report annual losses climbing into six figures just from preventable file mishaps. The cause isn’t always technical—it’s often the cultural rot of “that’s how we’ve always done it,” or a leadership blind spot that equates file management with mere admin work.

| Industry | Average Hours Lost per Week (per employee) | Annual Financial Impact (USD, per 100 employees) | Most Common File Processing Error |
|---|---|---|---|
| Finance | 3.4 | $85,000 | Version conflicts, misrouted files |
| Healthcare | 4.2 | $98,000 | Data entry errors, missing metadata |
| Technology | 2.7 | $74,000 | Integration failures, lost attachments |
| Creative | 3.9 | $91,000 | File format compatibility |

Table 1: Productivity loss from file processing errors across industries. Source: Original analysis based on Inc.com, 2024, Toxigon, 2024

"Most teams don’t even realize where their file workflows break down until it’s too late." — Maya, workflow analyst (LinkedIn, 2024)

The reality is ruthless: poor file processing doesn’t just slow you down—it erodes trust, drives top talent out the door, and leaves your organization wide open for embarrassing blunders. If you’re not actively stress-testing your workflows, file chaos will find you.

The evolution: from dusty filing cabinets to AI-driven systems

File processing has never been static. What started as paper shuffling in locked cabinets has transformed—radically—over the last four decades. Each leap in technology brought efficiency, but also new risks. Here’s a quick timeline to ground this evolution:

- Manual filing era (pre-1980s): Everything was paper. Retrieval meant physical searches, and loss was often permanent.

- Digital desktop revolution (1980s–1990s): Local drives and standalone office software. Files were easy to lose, duplicate, or corrupt.

- Networked storage (late 1990s–2000s): Shared drives and email attachments. Collaboration improved, but so did the spread of outdated versions.

- Cloud collaboration (2010s): Real-time editing, anywhere access. Security and compliance challenges exploded.

- AI-augmented file processing (2020s): Automation, smart routing, and metadata-driven workflows. Speed and scale improved—along with the complexity of tracking what happens to every file.

Every transition promised salvation, but each also introduced a new breed of vulnerabilities: accidental overwrites, privacy breaches, or the infamous “black hole” where files just… disappear. Today’s AI-driven solutions can process thousands of files per minute, but if you don’t understand what’s happening under the hood, you’re one script glitch away from a major incident.

Unpacking the myth: is automation always the answer?

Automation is seductive: it whispers about liberation from drudgery, error-free workflows, and an end to tedious manual checks. But the truth is messier. Blindly automating file processing can create a minefield of invisible failures—errors are propagated at light speed, and oversight evaporates when everything is “hands-off.” Research from Toxigon, 2024 shows that organizations relying solely on automation for file management are 2.3 times more likely to suffer major data integrity incidents than those using hybrid strategies.

"Automation is a tool, not a silver bullet. Blind trust is expensive." — Chris, IT strategist (Toxigon, 2024)

Hybrid models—combining AI muscle with human review—are gaining traction. The reality? Automation excels at speed and scale but still stumbles on context, exceptions, and ethical judgment. The most resilient enterprises treat automation as a force multiplier—not a replacement for critical thinking.

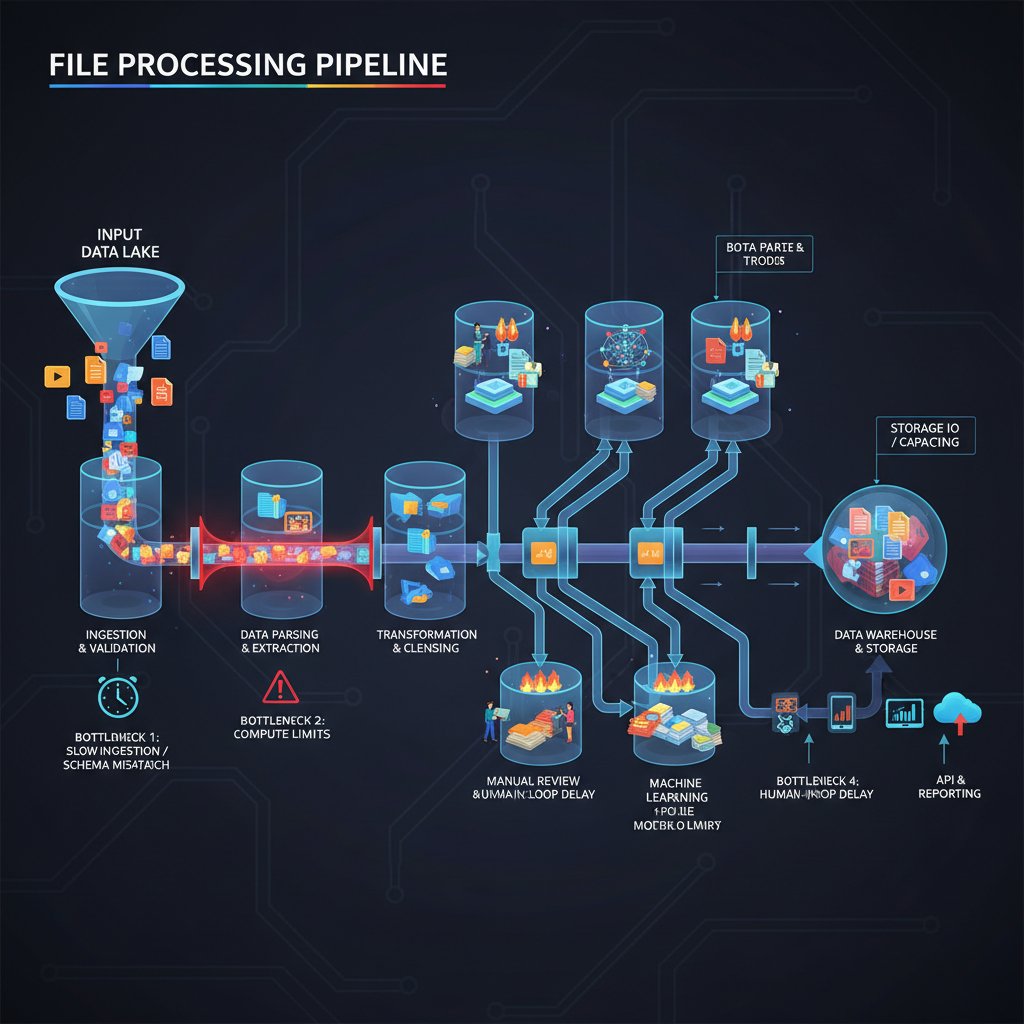

Decoding the modern file processing pipeline

What actually happens when you process a file?

Behind every file you upload, share, or archive is a gauntlet of technical steps. Understanding these is non-negotiable if you want to spot where things can (and do) go wrong.

Key file processing stages:

- Ingestion: The file enters the system, often via upload, email, or automated feed.

- Validation: The system checks integrity, format, and compliance with enterprise rules.

- Transformation: Files may be converted, resized, encrypted, or have metadata added.

- Distribution: The file is routed to the right people, folders, or integrated systems.

- Storage: The file is archived with backup, versioning, and retention protocols.

For example, in finance, a single spreadsheet might pass through all five stages—including automated compliance scans and multi-level approvals—before it’s visible to accounting. In healthcare, patient records must be validated and encrypted before distribution, with auditable trails at every hop. In creative industries, massive media files are ingested, transcoded, and distributed to global teams—each step adding complexity, risk, and opportunity for innovation.
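The five stages are easier to reason about when each one is a separate, testable step. What follows is a minimal sketch, not a production design: the `FileRecord` type, the `ALLOWED_EXTENSIONS` allow-list, and the routing table are all assumptions made for illustration, and a real pipeline would add encryption, approvals, and durable storage.

```python
import hashlib
from dataclasses import dataclass, field
from pathlib import PurePath

# Hypothetical allow-list; a real system would load this from policy.
ALLOWED_EXTENSIONS = {".pdf", ".docx", ".csv"}


@dataclass
class FileRecord:
    name: str
    content: bytes
    metadata: dict = field(default_factory=dict)
    history: list = field(default_factory=list)  # stages passed so far


def ingest(name: str, content: bytes) -> FileRecord:
    """Ingestion: the file enters the system."""
    record = FileRecord(name=name, content=content)
    record.history.append("ingested")
    return record


def validate(record: FileRecord) -> FileRecord:
    """Validation: check format and basic integrity, and fail loudly."""
    if PurePath(record.name).suffix.lower() not in ALLOWED_EXTENSIONS:
        raise ValueError(f"unsupported format: {record.name!r}")
    if not record.content:
        raise ValueError(f"empty file: {record.name!r}")
    record.history.append("validated")
    return record


def transform(record: FileRecord) -> FileRecord:
    """Transformation: here, only metadata is added (checksum and size)."""
    record.metadata["sha256"] = hashlib.sha256(record.content).hexdigest()
    record.metadata["size_bytes"] = len(record.content)
    record.history.append("transformed")
    return record


def distribute(record: FileRecord, routes: dict) -> FileRecord:
    """Distribution: pick a destination; unknown types go to a person."""
    suffix = PurePath(record.name).suffix.lower()
    record.metadata["destination"] = routes.get(suffix, "manual-review")
    record.history.append("distributed")
    return record


def store(record: FileRecord, archive: dict) -> FileRecord:
    """Storage: append a new version instead of overwriting the old one."""
    archive.setdefault(record.name, []).append(record)
    record.history.append("stored")
    return record


def process(name: str, content: bytes, routes: dict, archive: dict) -> FileRecord:
    record = validate(ingest(name, content))
    record = distribute(transform(record), routes)
    return store(record, archive)
```

Because every stage appends to `history`, a file that stalls or fails leaves a record of how far it got, which is the raw material for the audit trails discussed later.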

Common file formats and why they trip you up

File formats are the digital Tower of Babel. PDFs, DOCX, CSVs, and proprietary types—each brings its own quirks, compatibility nightmares, and security risks. According to Toxigon, 2024, 61% of file processing errors in multinational firms are traced to format incompatibilities.

| File Format | Pros | Cons | Typical Pain Points |
|---|---|---|---|
| PDF | Universal, secure, uneditable | Hard to extract data, bloated | OCR errors, unreadable fields |
| DOCX | Editable, standard in business | Versioning chaos, macro risks | Formatting loss, malware embedding |
| CSV | Simple, lightweight | No formatting, delimiter errors | Data misalignment, encoding issues |
| Proprietary | Optimized for specific tools | Lock-in, poor compatibility | Migration headaches, vendor risk |

Table 2: Comparison of common file formats and their pain points. Source: Toxigon, 2024

Unlocking hidden benefits:

- Mastering multiple formats allows seamless cross-team collaboration.

- Strategic format conversions can slash storage costs and speed up workflows.

- Understanding metadata structures supports smarter automation and tracking.

- Format fluency enables compliance with sector-specific regulations.

- Proactive format management minimizes the risk of data loss during migrations.
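The CSV pain points in Table 2 (delimiter errors, data misalignment, encoding issues) are among the few format problems you can catch with the standard library alone. The sketch below is one possible approach, assuming files are either UTF-8 or Windows-1252 and use one of four common delimiters; both assumptions are illustrative and should be adjusted to what your systems actually receive.

```python
import csv
import io


def decode_bytes(raw: bytes) -> tuple[str, str]:
    """Try UTF-8 (with optional BOM) first, then Windows-1252."""
    for encoding in ("utf-8-sig", "cp1252"):
        try:
            return raw.decode(encoding), encoding
        except UnicodeDecodeError:
            continue
    # latin-1 maps every byte, so this never fails, but the text may be wrong.
    return raw.decode("latin-1"), "latin-1"


def read_rows(raw: bytes) -> list[list[str]]:
    """Parse CSV bytes and reject files whose rows have differing widths."""
    text, _encoding = decode_bytes(raw)
    try:
        dialect = csv.Sniffer().sniff(text[:4096], delimiters=",;\t|")
    except csv.Error:
        dialect = csv.excel  # fall back to plain comma-separated
    rows = list(csv.reader(io.StringIO(text, newline=""), dialect))
    widths = {len(row) for row in rows}
    if len(widths) > 1:
        raise ValueError(f"inconsistent column counts: {sorted(widths)}")
    return rows
```

Rejecting a file with uneven rows at intake is usually cheaper than discovering shifted columns in a downstream report.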

Bottlenecks and blindspots: where workflows fail

Even the slickest digital workflow is only as strong as its weakest link. According to mindgrow.io, 2024, bottlenecks cluster in three areas: intake overload (too many files, too fast), manual interventions (approval dead zones, exception queues), and legacy integrations (old systems that can’t keep up or translate).

A quick checklist for finding trouble spots in your processes:

- Where do files “wait” the longest before being processed?

- Which steps require manual edits or approvals?

- How often do files “bounce back” due to formatting or validation errors?

- Are there recurring complaints from employees about “lost” or “stuck” files?

- Do audit trails reveal unexplained gaps or data mismatches?

Pinpointing these choke points is the first step toward a file processing pipeline that’s not just fast, but bulletproof.
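The first checklist question, where files wait the longest, can be answered from an event log if your system records one. Here is a rough sketch, assuming a hypothetical log of `(file_id, stage, ISO timestamp)` tuples; the stage names and log shape are invented for the example.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def stage_wait_times(events):
    """Return {stage: [seconds each file sat in that stage before moving on]}.

    events: iterable of (file_id, stage, iso_timestamp) tuples, in any order.
    """
    per_file = defaultdict(list)
    for file_id, stage, stamp in events:
        per_file[file_id].append((datetime.fromisoformat(stamp), stage))

    waits = defaultdict(list)
    for timeline in per_file.values():
        timeline.sort()  # chronological order for this file
        for (start, stage), (end, _next_stage) in zip(timeline, timeline[1:]):
            waits[stage].append((end - start).total_seconds())
    return dict(waits)


def slowest_stage(events):
    """Stage with the highest median wait, which is the likeliest bottleneck."""
    waits = stage_wait_times(events)
    return max(waits, key=lambda stage: median(waits[stage]))
```

The median is used rather than the mean so that one file stuck over a long weekend does not mask the typical delay.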

File processing in the age of AI: hype, hope, and hard truths

How AI is changing the game (and where it still sucks)

AI-powered file processing is rewriting the rulebook for speed and accuracy. Modern systems can auto-tag, route, summarize, and even redact sensitive information at a speed no human team could match. The marketing hype is everywhere—a promised land of error-free workflows and 24/7 productivity. But the numbers tell a more nuanced story: According to Toxigon, 2024, organizations using AI for file processing saw an average error rate reduction of 37%, but experienced an uptick in “silent” errors that only surfaced during audits.

| Method | Average Processing Speed | Error Rate | Cost (per 1,000 files) | Notable Weaknesses |
|---|---|---|---|---|
| Manual | 4 min/file | 3.7% | $120 | Fatigue, subjective errors |
| AI-powered | 9 sec/file | 2.3% | $78 | Context misses, silent failures |
| Hybrid (AI + human) | 10 sec/file | 1.5% | $95 | Coordination overhead, complexity |

Table 3: AI-powered vs. manual file processing. Source: Original analysis based on Toxigon, 2024, mindgrow.io, 2024

"If someone says AI will fix everything, run." — Jordan, AI engineer (Toxigon, 2024)

AI can process oceans of data. But when it comes to context—like understanding a legal nuance in a contract, or catching a cultural reference in a creative brief—it’s still deeply flawed. Savvy organizations don’t automate and forget; they automate and verify.
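It is worth checking the Table 3 numbers against each other. Moving from a 3.7% to a 2.3% error rate is a relative reduction of roughly 38%, in line with the 37% figure quoted above. The short calculation below works this through and adds one derived measure, cost per error-free file, which is our own illustration rather than a metric from the cited sources.

```python
# Figures copied from Table 3.
ERROR_RATE_PERCENT = {"manual": 3.7, "ai": 2.3, "hybrid": 1.5}
COST_PER_1000_USD = {"manual": 120, "ai": 78, "hybrid": 95}


def relative_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


def expected_errors(method: str, files: int = 1000) -> float:
    """Number of files expected to contain an error."""
    return ERROR_RATE_PERCENT[method] / 100 * files


def cost_per_clean_file(method: str, files: int = 1000) -> float:
    """Processing cost divided by the files expected to come through clean."""
    clean = files - expected_errors(method, files)
    return COST_PER_1000_USD[method] * (files / 1000) / clean


for method in ERROR_RATE_PERCENT:
    print(method, round(expected_errors(method)), f"{cost_per_clean_file(method):.4f}")
print(round(relative_reduction(3.7, 2.3), 1))
```

On these figures the hybrid approach costs more per clean file than AI alone, so the case for it rests on how expensive each remaining error is, not on processing cost.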

Case study: creative agencies vs. financial firms

Contrast is instructive. Creative agencies, swimming in massive media files, often rely on AI-driven tools for batch conversion, compression, and tagging. When a global campaign launches, files are automatically routed to editors in Tokyo, translators in Berlin, and client folders in New York—minutes, not days. Financial firms, meanwhile, deploy automation to check compliance on every transaction record: AI scans for flagged phrases, validates figures, and routes anomalies for manual review.

Healthcare sits somewhere in between. Patient forms arrive in a dozen formats, each requiring validation and encryption before filing. Hybrid models (AI for intake, humans for context) are standard. According to an internal survey at a Fortune 500 provider, hybrid processing cut error rates by 42% compared with all-AI or all-manual workflows.

What do these case studies reveal? No single solution reigns supreme. Instead, the winners ruthlessly tune their stack to their industry’s risks and realities—and never trust unverified automation.

Debunking myths: what AI can’t do (yet)

Let’s torch some persistent myths about AI and file processing:

- AI can’t reliably detect subtle context or intent—it flags anomalies, but nuance is lost.

- It’s not immune to inherited bias: flawed training data means flawed outputs.

- Automated systems are only as robust as their last update; new file types can break pipelines.

- AI-driven tools still need precise, well-governed inputs to avoid garbage-in, garbage-out errors.

Red flags when evaluating “AI-powered” file management tools:

- Lack of detailed audit trails or error logging.

- No option for manual overrides or hybrid processing.

- Overpromised “set and forget” marketing.

For best results, combine AI power with human expertise. Assign final review to humans on critical workflows, and keep feedback loops open to continually refine your automations.
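In practice, "automate and verify" often comes down to a routing rule: let the model handle what it is confident about, and send everything else to a person. The sketch below assumes a hypothetical classifier that reports a label and a confidence score; the threshold and the list of always-reviewed labels are placeholder policy values, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Classification:
    file_name: str
    label: str
    confidence: float  # 0.0 to 1.0, as reported by the (hypothetical) model


# Placeholder policy values; tune these against your own audit results.
REVIEW_THRESHOLD = 0.90
ALWAYS_REVIEW_LABELS = {"contract", "patient-record"}


def route(item: Classification) -> str:
    """Decide whether a classified file can skip human review."""
    if item.label in ALWAYS_REVIEW_LABELS:
        return "human-review"  # critical workflows always get a final check
    if item.confidence < REVIEW_THRESHOLD:
        return "human-review"  # the model is unsure, so a person decides
    return "auto-process"


def split_queue(items) -> dict:
    """Group file names by the queue they were routed to."""
    queues = {"auto-process": [], "human-review": []}
    for item in items:
        queues[route(item)].append(item.file_name)
    return queues
```

Tracking how often reviewers overturn the model's label gives you the feedback loop: if overrides are rare at a given confidence level, the threshold can come down, and if they are common, it should go up.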

Security, compliance, and the ethics of automation

File security: more than just passwords

If you think passwords and firewalls are enough, you’re already behind. Modern file threats range from brute-force cyber attacks to subtle insider leaks. According to mindgrow.io, 2024, 30% of breaches trace back to mishandled files—often via innocuous-looking spreadsheets or PDFs.

A step-by-step checklist for securing your file processing pipeline:

- Encrypt everything—at rest and in transit.

- Use strict access controls—least privilege is your friend.

- Enable multi-factor authentication for file access and workflow approvals.

- Log all file activity—every touch, move, and change.

- Automate vulnerability scans on all incoming and outgoing files.

- Train users to spot phishing, suspicious macros, and rogue attachments.

Ignore these, and your next breach might not even be a technical failure—it could be a trusted employee with a USB stick.
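Logging every touch only helps if the log itself can be trusted. One common technique is a hash chain, where each entry includes the hash of the one before it, so any later edit breaks the chain. The sketch below is a simplified in-memory illustration using only the standard library; the field names are assumptions, and a real deployment would also need write-once storage and access controls around the log.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry's fields."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list, actor: str, action: str, file_name: str, timestamp: str) -> dict:
    """Add an entry that is cryptographically linked to the previous one."""
    body = {
        "actor": actor,
        "action": action,
        "file": file_name,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

A chain like this detects tampering but does not prevent it, which is why it complements access controls rather than replacing them.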

Compliance: the rules are changing faster than your workflows

Regulatory environments are in permanent flux. GDPR, HIPAA, SOC 2—each brings new file handling standards, often with stiff penalties for failures. And they’re not static: updates to privacy rules or reporting requirements mean your file processing must be agile, auditable, and ready to prove compliance at a moment’s notice.

| Regulation | Core Requirement | Practical Implication |
|---|---|---|
| GDPR | Data minimization, right to erasure | Must track and delete files on demand |
| HIPAA | Protected health information (PHI) security | End-to-end encryption, audit trails |
| SOC 2 | Controls for data security, availability | Regular file access reviews, robust logs |

Table 4: Regulatory requirements in file processing. Source: Original analysis based on LinkedIn, 2024, Toxigon, 2024

Non-compliance isn’t just a fine or a slap on the wrist. It can lead to lost contracts, public embarrassment, and even jail time for responsible executives.
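"Track and delete files on demand" implies two separate triggers: a retention period that has run out, and an erasure request from the person a file is about. Here is a small sketch of how a file index might be scanned for both. The retention periods, category names, and index fields are invented for illustration and are not legal guidance; legal holds and backups are deliberately left out.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days; real values come from legal counsel.
RETENTION_DAYS = {"invoice": 7 * 365, "marketing": 2 * 365}


def deletion_candidates(index, today, erasure_subject_ids=frozenset()):
    """Return (file_name, reason) pairs for files that should be deleted.

    index: iterable of dicts with 'name', 'category', 'created' (a date),
    and 'subject_id' (the person the file is about, or None).
    """
    candidates = []
    for entry in index:
        if entry["subject_id"] in erasure_subject_ids:
            candidates.append((entry["name"], "erasure request"))
            continue
        limit = RETENTION_DAYS.get(entry["category"])
        # Categories without a defined period are kept, not silently deleted.
        if limit is not None and today - entry["created"] > timedelta(days=limit):
            candidates.append((entry["name"], "retention period expired"))
    return candidates
```

Returning a reason with each candidate keeps the decision explainable, which matters when an auditor asks why a file no longer exists.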

Ethics: when automation crosses a line

File automation isn’t just a technical question—it’s an ethical minefield. Automated “delete all” rules have vaporized vital evidence. AI misclassification has denied patients essential care. In one notorious case, a global firm auto-archived whistleblower reports, preventing legal review.

"Just because you can automate a process doesn’t mean you should." — Erin, compliance officer (LinkedIn, 2024)

The ethical playbook:

- Always keep critical “human-in-the-loop” checkpoints.

- Audit automations regularly for unintended consequences.

- Document decision criteria for what gets automated—and why.

If you can’t explain your file automations to a skeptical outsider, you’re probably over the line.

Workflow optimization: smarter, not harder

Spotting inefficiencies before they cost you

The silent killer of enterprise productivity isn’t always a system crash—it’s the slow bleed of inefficiency. Hidden delays in file approvals, redundant tasks, manual data entry: over time, these can choke your bottom line. According to Inc.com, 2024, inefficiencies in file processing account for up to 23% of lost working hours in Fortune 1000 companies.

Unconventional uses for file processing that maximize ROI:

- Deploy file automation to auto-categorize incoming RFPs, speeding up sales cycles.

- Use metadata extraction to power advanced analytics for customer insights.

- Link file processing with task management (like futurecoworker.ai) to auto-generate action items from incoming documents.

- Automate archiving of outdated files to reduce backup costs.

- Use intelligent routing to ensure high-priority files hit decision-makers’ inboxes—never languishing in shared folders.

Spotting and slashing these inefficiencies isn’t optional; in competitive industries, it’s existential.

Step-by-step: building a resilient file processing system

Ready to overhaul your workflow? Here’s the blueprint for a resilient, future-proof file processing system:

- Audit existing workflows: Map every file’s journey, noting all manual and automated steps.

- Identify and quantify bottlenecks: Use logs, employee feedback, and time-tracking data.

- Define outcome metrics: What does “success” look like—speed, accuracy, auditability?

- Choose the right tools: Select for scalability, interoperability, and ease of use.

- Implement in stages: Pilot automations in low-risk areas, then scale.

- Train everyone: From IT to front-line users; no one is exempt.

- Monitor and adapt: Review logs, error rates, and feedback weekly.

- Document everything: Build a living playbook of policies and exceptions.

Common mistakes to avoid:

- Over-automating without safeguards.

- Ignoring the need for user training.

- Failing to track post-implementation ROI.

Get these steps right, and your file processing goes from a liability to a competitive weapon.

When to call in the pros (and what they won't tell you)

There comes a tipping point where DIY fixes and internal hacks just don’t cut it—usually when workflows straddle multiple teams, geographies, or compliance regimes. That’s when outside help—consultants or specialized platforms like futurecoworker.ai—becomes essential.

Hidden benefits the pros won’t tell you about:

- They can spot systemic issues you no longer notice due to “routine blindness.”

- Their outside perspective accelerates buy-in from skeptical stakeholders.

- They bring tried-and-tested frameworks for scaling best practices.

- Their documentation is often more robust (and auditor-friendly) than homegrown solutions.

When evaluating vendors and tools, demand transparency, references, and real ROI metrics—not just a slick demo. And stay skeptical: even the best tools are no substitute for internal vigilance.

The human factor: collaboration, culture, and resistance

Why people still matter in file processing

Despite the relentless march of automation, people remain the arbiters of context, judgment, and creativity. Human oversight is what catches the file labeled “urgent” that’s actually a phishing attempt, or the client contract with one line of small-print hell. A 2024 survey by mindgrow.io found that 67% of major file processing failures could have been prevented by attentive human review.

Case in point: In a global campaign, a single creative flagged a copyright issue missed by three rounds of automated checks. Yet for every human breakthrough, there’s a human error—“accidentally” deleting an entire project folder, or attaching the wrong version to a mission-critical email.

Striking the right balance between automation and human skill is what separates the survivors from the casualties in file processing.

Culture wars: shadow IT and rogue workflows

When official processes don’t keep up, shadow IT rises from the ashes: employees using unauthorized tools or backchannel workflows to “get things done.” This breeds risk, fragmentation, and security nightmares. According to Inc.com, 2024, shadow IT is responsible for 32% of enterprise data leaks.

Red flags for spotting shadow IT:

- Increasing use of unapproved file-sharing platforms.

- Frequent “workarounds” for file transfer or format conversion.

- Sensitive files showing up in personal cloud accounts.

- Teams bypassing IT for mission-critical projects.

- Security audits revealing unknown software integrations.

Fostering a culture of responsible innovation means inviting feedback, rapidly iterating official tools, and being transparent about process changes. Suppress shadow IT at your peril; instead, channel its energy toward constructive evolution.

Training, onboarding, and the myth of the 'smart user'

Most file processing disasters aren’t caused by bad actors—they’re the result of overestimating user tech literacy. The myth of the “smart user” leads to corners being cut in onboarding and training. The fallout? Routine mistakes, missed updates, and catastrophic misclicks.

Priority checklist for effective training:

- Map user personas: Tailor training for executives, admins, and front-line staff.

- Focus on real-world scenarios: Use case studies, not just slide decks.

- Reinforce the why: Explain the impact of good (and bad) file practices.

- Keep it continuous: Make updates part of regular workflow, not one-off events.

- Encourage feedback: Build a culture where users flag process pain points.

Bridging the gap between technical and non-technical staff isn’t a one-off project—it’s an ongoing investment. The best systems are only as good as the people who use them.

Real-world disasters (and what they teach us)

When file processing goes wrong: three cautionary tales

There’s no shortage of “I can’t believe we did that” stories in file processing—some public, many buried deep in corporate shame files. Three stand out:

- The lost contract: A global retailer’s final signed deal vanished in a misnamed folder. The deal fell through; the company lost millions.

- Security leak: An unencrypted file sent via shadow IT exposed thousands of customer records. The breach went undetected for months, triggering regulatory fines and reputation damage.

- PR nightmare: An out-of-date press release, mistakenly attached and auto-sent to media contacts, sparked a social media uproar and a sharp dip in stock value.

| Incident | Cause | Consequence | Recovery Steps |
|---|---|---|---|
| Lost contract | Poor naming, lack of audit | $2M lost revenue | Manual search, policy overhaul |
| Security leak | Shadow IT, no encryption | Regulatory fine, lost trust | Forensics, new encryption |
| PR nightmare | Outdated file, auto-send | Reputation hit, stock drop | Public apology, automation fix |

Table 5: File processing disaster breakdown. Source: Original analysis based on Inc.com, 2024, mindgrow.io, 2024

Most of these disasters could have been prevented by basic oversight, better naming conventions, or proactive monitoring.

Learning from near-misses: almost-disasters and the fixes that worked

Every disaster has a close cousin: the near-miss—averted through quick thinking or smart protocols. One operations lead at a logistics firm recounts a last-minute save:

"We were one click away from losing millions." — Dana, operations lead (mindgrow.io, 2024)

Best practices from these episodes:

- Always back up before large-scale automated actions.

- Build in “undo” windows for critical workflows.

- Maintain real-time alerts for abnormal activity.

- Encourage a “stop and recheck” culture for batch operations.

These aren’t just IT lessons—they’re organizational resilience in action.
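An "undo" window can be as simple as never deleting directly: automated jobs move files into a holding area, and a separate job purges them once the window has passed. The sketch below shows the idea with the standard library. The function names, the `.trash` layout, and the timestamp-prefix naming scheme are assumptions made for the example, and it does not replace a real backup.

```python
import shutil
import tempfile
import time
from pathlib import Path


def soft_delete(path: Path, trash_dir: Path) -> Path:
    """Move a file into the trash, prefixing its name with the deletion time."""
    trash_dir.mkdir(parents=True, exist_ok=True)
    target = trash_dir / f"{time.time_ns()}__{path.name}"
    shutil.move(str(path), str(target))
    return target


def restore(trashed: Path, original_dir: Path) -> Path:
    """Undo a soft delete, refusing to overwrite a file that now exists."""
    destination = original_dir / trashed.name.split("__", 1)[1]
    if destination.exists():
        raise FileExistsError(destination)
    shutil.move(str(trashed), str(destination))
    return destination


def purge_expired(trash_dir: Path, undo_window_seconds: float, now_ns=None) -> list:
    """Permanently remove trashed files older than the undo window."""
    now_ns = time.time_ns() if now_ns is None else now_ns
    removed = []
    for item in sorted(trash_dir.iterdir()):
        prefix, separator, _name = item.name.partition("__")
        if not separator or not prefix.isdigit():
            continue  # not created by soft_delete, so leave it alone
        if (now_ns - int(prefix)) / 1e9 > undo_window_seconds:
            item.unlink()
            removed.append(item.name)
    return removed


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        contract = root / "contract.pdf"
        contract.write_bytes(b"signed")
        trashed = soft_delete(contract, root / ".trash")
        print("restored to", restore(trashed, root))
```

Keeping the purge as a separate step means a faulty batch job can fill the trash but cannot destroy anything on its own.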

Building resilience: how to disaster-proof your workflows

A robust file processing system isn’t a set-and-forget project—it’s a living, breathing organism. Strategies for resilience:

- Incident response: Map out who does what, when things go wrong.

- Regular drills: Simulate disasters to test your response.

- Continuous improvement: Iterate processes based on real failures and user feedback.

- Documentation: Keep playbooks updated and accessible.

- Post-mortems: Analyze every incident—no matter how small—for root causes.

A culture of learning from failure is your best insurance against the next blackout.

Future trends: where file processing is headed (and what to do now)

Emerging tech: quantum, blockchain, and beyond

If you believe the hype, quantum computing and blockchain are the future of file processing. In reality, bleeding-edge innovations like quantum encryption are still experimental, and blockchain’s immutability can create as many problems as it solves—especially when files need to be altered or deleted.

The smart move? Monitor these trends, but don’t bet your business on unproven solutions. Focus on proven tech that scales—cloud, robust metadata, and hybrid AI-human workflows.

The rise of intelligent enterprise teammates

AI-powered coworkers, such as futurecoworker.ai, are changing how enterprises interact with file processing and collaboration. Instead of juggling dozens of apps, teams can leverage intelligent agents embedded in their core workflows—summarizing threads, auto-organizing attachments, and surfacing action items in real time.

Three examples of real-world AI integration:

- A marketing agency uses AI to auto-tag and assign incoming client briefs to the right teams, reducing project kickoff time by 40%.

- A finance firm integrates AI to auto-generate compliance checklists from every incoming report, slashing manual review by 30%.

- Healthcare providers deploy AI agents to instantly flag missing patient consent forms, cutting down administrative errors and improving patient satisfaction.

Adoption isn’t always seamless—common challenges include user resistance, unclear ROI, and integration headaches. Overcoming these means investing in change management, clear communication, and choosing tools that complement, not replace, human expertise.

How to future-proof your file processing strategy

Staying adaptable isn’t a luxury—it’s survival. A forward-thinking strategy means continuous assessment and improvement.

- Assessment: Regularly audit your file workflows—don’t assume what worked last year is still optimal.

- Agility: Build flexible processes that allow rapid pivots to new regulations or business needs.

- Upgrade tools: Stay current with proven platforms and retire legacy systems before they bite you.

- Ongoing education: Keep teams trained on new threats, best practices, and technology shifts.

Bridge these actions to your broader digital transformation goals. For more on optimizing enterprise collaboration, review resources at futurecoworker.ai.

Beyond the obvious: adjacent issues and deeper debates

Shadow IT: the silent saboteur of file processing

Unauthorized tools and duct-tape solutions are the silent saboteurs of enterprise file processing. While they may solve short-term pain, the long-term result is chaos: fragmented data, uncontrolled risks, and compliance black holes.

- Shadow IT: Tools and workflows adopted without official approval or oversight. Examples include using personal cloud accounts for work files or unsanctioned automation scripts.

- Sanctioned workflows: Officially approved, IT-governed processes. Examples include enterprise document management systems and authorized file-sharing platforms.

To regain control, organizations must balance security with usability. Clamp down too hard, and innovation dies; ignore shadow IT, and disaster soon follows.

The ethics of digital collaboration: who owns your files?

Ownership of digital files is murky—especially in collaborative environments. Is it the creator, the company, or everyone who touches the file?

- Who has ultimate accountability for file integrity?

- What happens when files leave the organization?

- How are contributors credited for their work?

- How are privacy and consent maintained in shared workflows?

- Who decides when a file should be deleted—or preserved?

Transparent policies, clear access logs, and regular reviews are critical for ethical digital collaboration.

Why file processing isn’t just an IT problem

File processing touches everyone—sales, marketing, HR, operations. When it fails, the pain is company-wide.

"When file processing fails, everyone pays the price." — Alex, project manager (Inc.com, 2024)

To build true accountability:

- Make file management a shared KPI across teams.

- Tie workflow improvements to business outcomes.

- Involve non-technical staff in process mapping and tool selection.

A holistic, company-wide approach is the only way to turn file processing from a pain point into a competitive advantage.

Your playbook: mastering file processing in 2025 and beyond

Quick reference: your 12-point file processing checklist

Every organization needs a go-to checklist for file processing essentials:

- Map all file workflows, end-to-end.

- Standardize naming conventions and folder structures.

- Implement robust metadata tagging.

- Automate routine processing but retain human review for exceptions.

- Secure files with encryption and strict access controls.

- Log all file access and changes.

- Regularly review and update compliance policies.

- Provide continuous training for all users.

- Monitor shadow IT and unauthorized workflows.

- Schedule regular audits and drills.

- Maintain clear documentation and playbooks.

- Build feedback loops for ongoing improvement.

Refer to this playbook before any major workflow change—and revisit it quarterly to stay ahead of new threats and opportunities.
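Some checklist items can be enforced by code rather than by memo, and naming conventions are the easiest place to start. The sketch below validates file names against one hypothetical convention, `<project>_<doctype>_<YYYY-MM-DD>_v<NN>.<ext>`, and picks the latest version of each document. The convention itself is an assumption; the point is that whatever standard you choose should be machine-checkable.

```python
import re
from datetime import date

# Hypothetical convention: acme-renewal_contract_2024-03-15_v02.pdf
NAME_PATTERN = re.compile(
    r"^(?P<project>[a-z0-9]+(?:-[a-z0-9]+)*)_"
    r"(?P<doctype>[a-z]+)_"
    r"(?P<date>\d{4}-\d{2}-\d{2})_"
    r"v(?P<version>\d{2})"
    r"\.(?P<ext>[a-z0-9]+)$"
)


def parse_name(file_name: str):
    """Return the name's fields as a dict, or None if it breaks the convention."""
    match = NAME_PATTERN.match(file_name)
    if match is None:
        return None
    fields = match.groupdict()
    try:
        fields["date"] = date.fromisoformat(fields["date"])
    except ValueError:
        return None  # right shape, impossible date (for example 2024-13-40)
    fields["version"] = int(fields["version"])
    return fields


def latest_versions(file_names) -> dict:
    """Map (project, doctype) to the compliant name with the highest version."""
    latest = {}
    for name in file_names:
        fields = parse_name(name)
        if fields is None:
            continue  # non-compliant names are skipped, not guessed at
        key = (fields["project"], fields["doctype"])
        if key not in latest or fields["version"] > latest[key][0]:
            latest[key] = (fields["version"], name)
    return {key: name for key, (_version, name) in latest.items()}
```

Running a check like `parse_name` at upload time turns the naming standard from a guideline into a gate, which is where misnamed contracts get caught.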

Glossary: file processing terms you need to know

If you want to navigate the file processing minefield, you need to speak the language. Here’s what matters:

- Metadata: Data describing other data (e.g., author, creation date)—the “file fingerprint.”

- Ingestion: Entry point for files into a system.

- Transformation: Changing file format, structure, or content.

- Distribution: Routing files to their intended destination.

- Retention policy: Rules governing how long files are kept.

- Audit trail: Log of all file actions—crucial for security and compliance.

- Shadow IT: Unapproved tools or workflows.

- Encryption: Coding files to prevent unauthorized access.

- Version control: System for tracking changes to files over time.

- Hybrid workflow: Combination of automated and manual file processing.

Stay fluent, and you’ll stay in control—even as the technology landscape shifts.

Takeaways: brutal truths to act on now

You’ve heard the horror stories and seen the numbers. Here are the brutal truths—no sugarcoating, just action:

- Ignoring file processing is actively risking disaster.

- Automation without oversight is a ticking time bomb.

- Compliance is not a checkbox—it’s a moving target.

- Shadow IT will sabotage you if you let it fester.

- Human expertise is your last line of defense—and your best source of innovation.

Don’t let complacency or cultural inertia make your file processing the weak link in your enterprise. The unvarnished truth is simple: Those who master it thrive. Those who don’t, pay the price.

Sources

References cited in this article

- Brutal Business Truths Every Entrepreneur Must Face, mindgrow.io (mindgrow.io)

- 15 Brutal Truths Entrepreneurs Don't Want to Admit, Inc.com (inc.com)

- 12 Brutal Truths for Employers, LinkedIn (linkedin.com)

- File Metadata 101: Why It Matters and How to Use It, Toxigon (toxigon.com)

- The Evolution of Document Processing, Qbotica (qbotica.com)

- From Filing Cabinets to AI, Iron Mountain (ironmountain.com)

- Modern Data Processing, Snowflake (snowflake.com)

- Dagster Guides (dagster.io)

- Common File Format Overview, ScienceDirect (sciencedirect.com)

- Understanding Common File Formats, FileFlip (fileflip.app)

- Document Automation Clears Workflow Bottlenecks, Tomorrow's Office (tomorrowsoffice.com)

- 8 Workflow Bottlenecks, LucidLink (lucidlink.com)

- Artificial Intelligence, Real Concerns: Hype, Hope and the Hard Truth About AI (securityintelligence.com)

- SC Media: Battling Ransomware in the Age of AI (scworld.com)

- The Future of Data Processing: How AI Changes the Game, Emerge Digital (emerge.digital)

- AI Document Processing, Addepto (addepto.com)

- How File Transfer Automation Helps Solve Operational Efficiency, Security and Compliance Challenges, OPSWAT (opswat.com)

- Compliance Automation Guide, CyberSierra (cybersierra.co)

- Beyond Encryption: Advanced Techniques for File Security, Kiteworks (kiteworks.com)

- Is Password-Protecting a Document Secure?, Beyond Encryption (beyondencryption.com)

- Workflow Optimization Techniques, Optiow (optiow.com)

- Work Smarter, Not Harder, Officium.uk (officium.uk)

- Implementing SRE: Step-by-Step Guide, Medium (amitchaudhry.medium.com)

- Designing a File Processing Workflow, triglon.tech (triglon.tech)

- The Human Factor in M&A, CCY (ccy.com)

- Ergonomics and Human Factors, MDPI (mdpi.com)

- Common User Onboarding Myths Busted, ProductLed (productled.com)

- Top 4 Myths of Remote Onboarding, Apty (apty.io)

- Resilience Unleashed: Lessons Learned From Ransomware Disasters, Disaster Recovery Journal (drj.com)

- 7 Data Disasters & Lessons Learned, Rewind (rewind.com)
