Assistant Fix That Lasts: Turning Broken AI Coworkers Into Assets

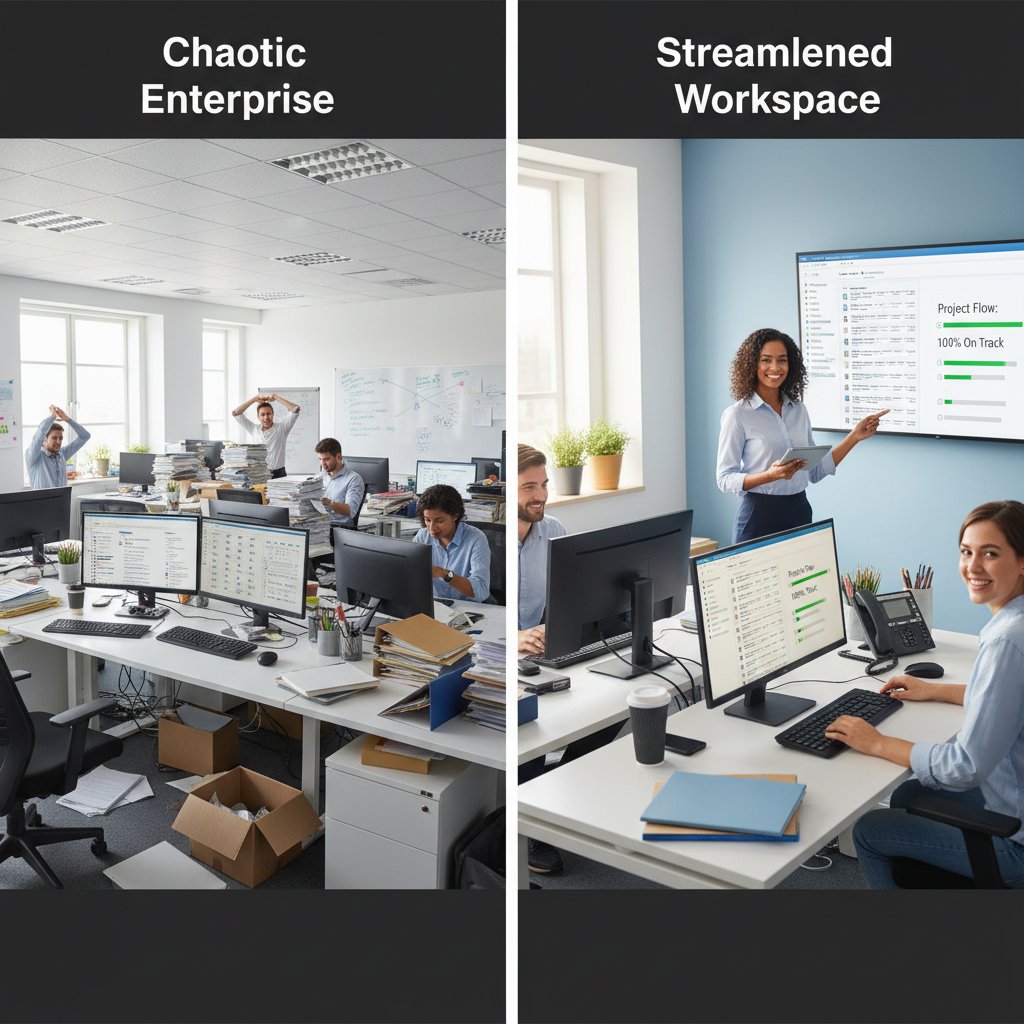

Picture this: a sleek office brimming with ambitious teams, shimmering screens, and the latest digital assistant humming in the background. Suddenly, your AI teammate stutters. Emails pile up, deadlines vanish into the ether, and panic spreads. You reach for the reset button—because that’s what we’ve been trained to do. But in the enterprise world, the “assistant fix” isn’t a magic bullet. It’s an ongoing battle with hidden tech debt, psychological comfort zones, and a relentless tide of half-baked solutions. This article dives deep beneath the glossy surface of digital productivity to expose the brutal truths, hidden costs, and real strategies for making AI coworkers actually work. If you think a simple reboot will rescue your workflow, buckle up. The only way out is through—armed with ruthless honesty, smart diagnostics, and a willingness to challenge everything you think you know about AI in the workplace.

Why ‘assistant fix’ is more than pressing reset

The illusion of quick fixes

There’s an irresistible allure to the big red “reset” button: a promise of instant clarity and restored order. But in enterprise AI, quick fixes almost never deliver. Organizations crave speed. When the assistant glitches, the reflex is to reboot, patch, or update. This chase for immediacy offers a psychological salve, a sense of control amid chaos. Meanwhile, adoption is outrunning diagnosis: according to the Microsoft Work Trend Index, 2024, 75% of global knowledge workers now use generative AI, with usage nearly doubling in six months. The pace is blistering, and so is the risk of treating deep-rooted issues with superficial solutions.

The comfort of “just fixing it” lies in its simplicity. It’s a ritual—one that signals action, even if it’s only surface-deep. But as the digital workspace grows more intricate, the gap between perception and reality widens. “Most AI failures start with wishful thinking, not code,” says Jordan, an industry veteran. Too often, a reboot feels like progress, but in reality, it quietly piles up technical debt, masking systemic weaknesses until the next failure erupts.

Superficial fixes create invisible liabilities. Each time a model is patched without tracing the true cause, hidden problems fester. These quick solutions breed reliance on temporary relief, not sustainable performance. The result? A fragile, brittle system—always one glitch away from chaos.

What’s really going wrong under the hood

AI assistants are not monoliths—they’re sprawling constellations of data pipelines, integrations, user behaviors, and machine learning models. When something breaks, the “symptom” is rarely the disease. Failure can stem from outdated models, context drift, corrupted training data, unpatched bugs, authentication errors, or even organizational habits.

| Failure Mode | Frequency | Typical Business Impact |
|---|---|---|
| Data integration error | 34% | Missed deadlines, incorrect task assignments |
| Model drift/context failure | 22% | Irrelevant suggestions, loss of trust |
| Authentication/permissions bug | 19% | Blocked workflows, security incidents |
| User input/formatting error | 15% | Task loss, communication breakdown |
| System update conflict | 10% | Downtime, feature loss |

Table 1: Statistical summary of common enterprise AI assistant failures.

Source: Original analysis based on Microsoft Work Trend Index, 2024, Forbes, 2024.

The difference between user error and system error often blurs. A team might blame an employee for “using the tool wrong,” but system logs tell a nastier story: context windows expired, APIs silently failed, or permissions were revoked after a minor password policy tweak.

Root cause analysis: A systematic process for identifying the underlying reasons for a failure, not just its immediate symptoms. It often requires cross-functional collaboration and in-depth log review.

Technical debt: Unresolved issues or hastily patched problems that accumulate over time, making future fixes slower, riskier, and costlier.

Systemic failure: Widespread, recurring breakdowns that point to structural flaws in design, process, or governance, beyond single-user mistakes.

The true cost of downtime: more than lost minutes

A stalled assistant isn’t just a minor annoyance—it’s a productivity black hole. Each minute of downtime ripples across teams, eroding trust, killing momentum, and opening cracks for security breaches. According to Gallup, 2024, employee readiness to work with AI actually dropped by 6 percentage points in the past year, a silent signal of growing frustration with unreliable systems.

- Lost deals: Missed follow-ups and delayed responses can nuke big opportunities.

- Employee morale: Constant glitches grind down confidence and stoke burnout.

- Security gaps: Inconsistent fixes create backdoors for data leaks and unauthorized access.

- Reputational damage: Clients and partners notice when your digital tools collapse mid-project.

- Costly workarounds: Teams invent manual hacks that introduce new errors and inefficiencies.

Consider a global marketing firm that relied on an AI assistant for client communications. A delayed assistant fix led to a missed proposal deadline, costing the team a six-figure account and weeks of apology campaigns. The root problem? A superficial model reset that masked a deeper integration failure.

Common myths about fixing intelligent assistants

‘Just restart it’: the myth of the magic reboot

The idea that a simple restart cures all digital ills is deeply embedded in tech culture. In consumer gadgets, a reboot often works. But enterprise AI is a different beast: it is not a single device but a system stitched together from dozens of services, databases, and integrations. Rebooting wipes the slate clean for a moment, but it doesn’t touch the undercurrents.

Repeated surface-level fixes are dangerous. They create a cycle of dependency where teams normalize dysfunction and stop asking hard questions. “If you’re rebooting every week, you’re not fixing—you’re surrendering,” says Casey, a lead engineer at a Fortune 100 company.

Consumer expectations don’t translate. At home, a glitchy assistant might be a brief annoyance. In the enterprise, every failure reverberates across schedules, contracts, and compliance. The difference in stakes is seismic.

Blaming the user: dangerous misdirection

Blaming the user is the oldest dodge in IT. It’s easy, fast, and usually wrong. ADP Research, 2024 finds that only 21% of companies have actually trained employees on AI assistants. Yet when things fail, fingers point at the nearest human.

In reality, system logs reveal a harsher truth: a majority of assistant failures—upwards of 60% in some studies—stem from underlying technical or process problems, not user error.

- Frequent unexplained behavior changes

- Simultaneous failures across multiple users

- Issues following recent updates or policy changes

- Lack of transparent error messages or diagnostics

These are all red flags that the assistant fix needs to start with the system, not shaming the team. Transparent diagnostics—a clear audit trail of what happened and why—are essential for honest problem-solving.
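One of those red flags, simultaneous failures across multiple users, is easy to check once failure events can be exported from the assistant’s logs. The sketch below is illustrative only: the `(timestamp, user, error_code)` tuple layout, the function name `systemic_errors`, and the ten-minute window and three-user threshold are assumptions, not values from any particular product.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def systemic_errors(events, window=timedelta(minutes=10), min_users=3):
    """Return {error_code: peak distinct users} for errors that hit at least
    `min_users` different users inside one sliding `window`.

    `events` is an iterable of (timestamp, user, error_code) tuples
    (a hypothetical export format).
    """
    by_code = defaultdict(list)
    for ts, user, code in events:
        by_code[code].append((ts, user))

    flagged = {}
    for code, hits in by_code.items():
        hits.sort()
        start = 0
        for end in range(len(hits)):
            # Advance the left edge until the span fits inside the window.
            while hits[end][0] - hits[start][0] > window:
                start += 1
            users = {user for _, user in hits[start:end + 1]}
            if len(users) >= min_users:
                flagged[code] = max(flagged.get(code, 0), len(users))
    return flagged


t0 = datetime(2024, 5, 1, 9, 0)
events = [
    (t0, "ana", "AUTH_401"),
    (t0 + timedelta(minutes=2), "ben", "AUTH_401"),
    (t0 + timedelta(minutes=5), "cy", "AUTH_401"),
    (t0, "ana", "BAD_INPUT"),
    (t0 + timedelta(hours=5), "ana", "BAD_INPUT"),
]
print(systemic_errors(events))  # {'AUTH_401': 3}
```

An error that clusters across several users in a short window points at the system. An error that keeps recurring for one person is a better candidate for a training conversation.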

Myth-busting: AI is ‘set it and forget it’

The fantasy of fully autonomous AI assistants is persistent—and deeply misleading. Real-world deployment is messy. Models drift, integrations break, and context windows expire. Without ongoing tuning, even the best assistant devolves into digital noise.

Reliability requires ongoing oversight. Teams that treat AI as “set and forget” soon find themselves besieged by ghosts in the machine: reminders that never come, tasks that vanish, and meetings that self-destruct.

Common “set it and forget it” mistakes include:

- Using an assistant fix as a band-aid for broken integrations

- Relying on default models without retraining for business context

- Ignoring security patch notifications until a breach occurs

Root cause analysis: the only assistant fix that lasts

How to perform a real root cause analysis

A proper assistant fix begins with ruthless honesty. Root cause analysis is about peeling back layers—no shortcuts, no ego. Here’s how to do it:

- Reproduce the failure: Have the original user walk you through exactly what happened.

- Gather system logs: Pull diagnostic data from the assistant, integrations, and infrastructure.

- Map dependencies: Identify all moving parts—APIs, databases, user accounts, permissions.

- Isolate variables: Test components independently to rule out false positives.

- Trace upstream and downstream impacts: Don’t stop at the first symptom. Model how the failure rippled out.

- Document and communicate: Record every finding, fix, and mitigation for future reference.

- Verify and monitor: Confirm the fix holds over time—don’t celebrate too soon.

Using diagnostic logs and system reports is non-negotiable. They illuminate hidden conflicts, expired tokens, or stealthy bugs that manual inspection can’t catch.
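The log-gathering and tracing steps above can be sketched in a few lines. This minimal example assumes JSON-formatted log lines with hypothetical `ts`, `level`, `request_id`, and `component` fields, and ISO-8601 timestamps in a single time zone so that string comparison matches chronological order. For each request it keeps only the earliest error, on the theory that the first component to fail is a better root-cause candidate than the ones that failed after it.

```python
import json
from collections import Counter


def first_failures(log_lines):
    """Count, per component, how often it was the first to fail in a request."""
    earliest = {}  # request_id -> (timestamp, component)
    for line in log_lines:
        entry = json.loads(line)
        if entry["level"] != "ERROR":
            continue
        candidate = (entry["ts"], entry["component"])
        key = entry["request_id"]
        if key not in earliest or candidate < earliest[key]:
            earliest[key] = candidate
    return Counter(component for _, component in earliest.values())


logs = [
    '{"ts": "2024-05-01T09:00:02Z", "level": "ERROR", "request_id": "r1", "component": "mailer"}',
    '{"ts": "2024-05-01T09:00:01Z", "level": "ERROR", "request_id": "r1", "component": "auth"}',
    '{"ts": "2024-05-01T09:05:00Z", "level": "INFO", "request_id": "r2", "component": "calendar"}',
    '{"ts": "2024-05-01T09:06:00Z", "level": "ERROR", "request_id": "r3", "component": "auth"}',
]
print(first_failures(logs).most_common(1))  # [('auth', 1 + 1)] i.e. [('auth', 2)]
```

In this sample the mailer error in request `r1` is a downstream symptom: the auth component failed one second earlier. The ranking is a starting hypothesis for the isolation step, not a verdict.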

| Fix Approach | Time to Implement | Effectiveness | Recurrence Rate |
|---|---|---|---|
| Quick reset | 2 minutes | Low | High |
| Model retraining | 1-2 days | Medium | Moderate |
| Deep root cause fix | 2-7 days | High | Low |

Table 2: Comparison of superficial vs. deep-dive fixes for AI assistants.

Source: Original analysis based on BBC Worklife, 2023, Microsoft Work Trend Index, 2024.

Case study: a fix that stuck—and one that didn’t

At a mid-sized finance firm, the IT team fell into a cycle of weekly restarts whenever the assistant lagged or misrouted emails. Productivity flatlined. Each patch papered over a deeper issue: outdated OAuth tokens causing silent authentication failures during high-traffic periods.

Contrast this with a marketing agency that invested in root cause analysis. They mapped dependencies, retrained models for their specific workflows, and institutionalized documentation. Not only did outages disappear, but user trust rebounded and project turnaround improved by 40%.

Tools and frameworks for sustainable fixes

Sustainable assistant fixes demand serious tools. Diagnostic platforms like ELK Stack, New Relic, and Splunk help monitor, analyze, and visualize failures in real time. Integration checkers and permission audit tools are indispensable.

- Inventory all integrations and dependencies.

- Establish diagnostic log pipelines.

- Automate alerts for failure patterns.

- Schedule regular reviews of assistant performance.

- Create a centralized knowledge base for fixes and lessons learned.
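As a rough illustration of automating alerts for failure patterns, the sketch below tracks the most recent call outcomes in a fixed-size window and signals when the failure rate crosses a threshold. The class name `FailureRateAlert`, the window size, and the 20% default are assumptions chosen for the example. In practice a monitoring platform would normally own this logic.

```python
from collections import deque


class FailureRateAlert:
    """Fire when the share of failures in the last `window_size` calls exceeds `threshold`."""

    def __init__(self, window_size=50, threshold=0.2):
        # A deque with maxlen silently drops the oldest outcome when full.
        self.outcomes = deque(maxlen=window_size)
        self.threshold = threshold

    def record(self, succeeded):
        """Record one outcome; return True if an alert should fire."""
        self.outcomes.append(bool(succeeded))
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data yet to judge
        failures = self.outcomes.count(False)
        return failures / len(self.outcomes) > self.threshold


alert = FailureRateAlert(window_size=5, threshold=0.4)
for ok in [True, True, False, False, False]:
    if alert.record(ok):
        print("failure rate above threshold, open an incident")
```

Waiting for a full window before alerting trades a short blind spot at startup for fewer false alarms from one or two early errors.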

Futurecoworker.ai has emerged as a thought leader in intelligent enterprise teammate troubleshooting, providing resources and expertise that go beyond superficial repairs. Documenting every fix isn’t bureaucracy—it’s survival. Shared knowledge inoculates the team against repeat failures.

The human factor: why assistant fix is a culture problem

Workplace habits that sabotage AI assistants

No assistant is immune to the quirks of human nature. Recurring workplace habits—often invisible—can sabotage digital coworkers. At a rapidly scaling tech startup, teams ignored update prompts, used cryptic email subject lines, and bypassed official workflows to “save time.” The result? The assistant’s context engine collapsed under ambiguity, triggering a domino effect of missed tasks.

- Sending emails with inconsistent formats

- Ignoring update and security prompts

- Sharing login credentials to “get things done faster”

- Skipping documented workflows in favor of ad-hoc solutions

Anecdotes like these are everywhere. At one startup, a single team’s creative “hacks” led to a week-long outage, as the assistant’s logic became hopelessly tangled. The lesson: culture eats code for breakfast.

Training, trust, and transparency

Ongoing user education isn’t a luxury; it’s a lifeline. According to ADP Research, 2024, only a fifth of enterprises offer formal training on AI use—no wonder trust is eroding. The gap between user expectation and actual assistant behavior grows with every undocumented fix.

“Transparency isn’t just a feature; it’s the foundation,” notes Taylor, a senior product manager. The most resilient teams share not only solutions, but the stories behind their breakdowns, making every assistant fix a collective lesson—not a secret whispered in IT backrooms.

When resistance isn’t futile: pushing back on bad AI

Sometimes, the smartest move is to push back. At a healthcare provider, users flatly rejected a new assistant rollout that ignored frontline workflows. The resulting feedback loop forced leadership to realign priorities—ultimately, the assistant relaunch cut administrative errors by 35%.

- Encourage open reporting of assistant issues without blame.

- Review and act on feedback in cross-functional teams.

- Document both failures and successful fixes.

- Celebrate incremental improvements—not just major overhauls.

When resistance is paired with collaboration, the whole organization benefits—and leadership finally gets the buy-in needed for systemic change.

Technical deep dive: what breaks and how to fix it for good

Common technical failure points

Technical fragility is the shadow side of AI convenience. The most frequent breakdowns are rarely catastrophic—they’re cumulative.

| Failure Point | Typical Impact | Frequency |
|---|---|---|
| API connectivity loss | Assistant can’t access data or trigger actions | High |
| Authentication errors | Users locked out, workflows stalled | High |
| Data parsing failures | Garbled tasks, missing context | Medium |
| Integration timeouts | Lost emails, unsynced updates | Medium |
| Permission misconfigurations | Security risks, workflow bottlenecks | Low |

Table 3: Technical failure points and their real-world impacts for AI assistants.

Source: Original analysis based on Microsoft Work Trend Index, 2024, BBC Worklife, 2023.

Error logs are your friends. Visual breakdowns—timestamped failures, request payloads, and authentication traces—reveal patterns invisible to the naked eye.

Advanced troubleshooting: beyond the basics

Advanced assistant fix protocols go several layers deeper:

- Run API endpoint diagnostics with real and mock requests.

- Audit user permissions and authentication chains.

- Trace error propagation across microservices.

- Integrate third-party monitoring for real-time anomaly detection.

- Document each fix and cross-reference with past issues.

Integrating third-party monitoring tools adds an early-warning layer—flagging subtle drifts and edge-case breakdowns before they become avalanches. Common mistakes? Skipping log reviews, ignoring “minor” errors, and patching in production without a rollback plan.
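Tracing error propagation is easier with an explicit dependency map. The sketch below assumes a hand-maintained dictionary of which service needs which (the service names are invented for illustration) and walks it breadth-first to list everything that could be affected when one component fails.

```python
from collections import deque


def downstream_of(failed, depends_on):
    """Return every service that directly or transitively depends on `failed`."""
    # Invert "service -> what it needs" into "component -> who needs it".
    dependents = {}
    for service, needs in depends_on.items():
        for need in needs:
            dependents.setdefault(need, set()).add(service)

    affected, queue = set(), deque([failed])
    while queue:
        current = queue.popleft()
        for service in dependents.get(current, ()):
            if service not in affected:
                affected.add(service)
                queue.append(service)
    return affected


# Hypothetical dependency map for an email-based assistant.
depends_on = {
    "assistant": ["mail_api", "calendar_api"],
    "mail_api": ["auth"],
    "calendar_api": ["auth"],
    "reporting": ["assistant"],
}
print(sorted(downstream_of("auth", depends_on)))
# ['assistant', 'calendar_api', 'mail_api', 'reporting']
```

Running the same walk before a patch goes out gives a quick list of what to retest, which also helps when writing the rollback plan.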

Security, compliance, and the hidden dangers

Every fix introduces new risks. Patch too quickly, and you might expose sensitive credentials, open shadow IT tunnels, or break compliance with industry standards. AI assistant repairs can inadvertently leave data exposed or workflows unlogged.

- Exposed credentials in debugging logs

- Shadow IT through unauthorized integrations

- Loss of audit trails after hasty reboot cycles

- Untracked changes to permissions or user roles

Compliance demands—especially in finance, healthcare, or education—mean every fix must be documented and, ideally, peer-reviewed. Balancing agility with governance is the only way to make assistant fixes stick without burning down the house.
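Exposed credentials in debugging logs are one of the few risks on this list that can be reduced with a few lines of code. The following sketch uses Python’s standard `logging.Filter` to mask values that follow keys such as `token=` or `password:`. The key list and the regular expression are assumptions and will not catch every format (bearer headers or JSON bodies, for example), so treat it as a safety net, not a guarantee.

```python
import logging
import re

# Assumed key names; extend to match what your integrations actually log.
SECRET_PATTERN = re.compile(
    r"(?i)\b(password|token|api[_-]?key|secret)\b(\s*[=:]\s*)(\S+)"
)


def redact(text):
    """Replace the value after a known secret key with a placeholder."""
    return SECRET_PATTERN.sub(r"\1\2[REDACTED]", text)


class RedactSecrets(logging.Filter):
    """Logging filter that masks secrets before a record is emitted."""

    def filter(self, record):
        # Format the message first so secrets passed as args are also covered.
        record.msg = redact(record.getMessage())
        record.args = None
        return True


handler = logging.StreamHandler()
handler.addFilter(RedactSecrets())
log = logging.getLogger("assistant.debug")
log.addHandler(handler)
log.setLevel(logging.DEBUG)
log.debug("retrying mail sync with token=%s for user=ana", "abc123")
```

Attaching the filter to the handler means every record written through that handler is masked, including records from code that was added in a hurry during an incident.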

DIY or enterprise? Choosing your assistant fix strategy

When do-it-yourself goes wrong (and right)

DIY assistant fixes can be empowering—or catastrophic. At a fintech startup, a lone engineer “hacked” a broken integration overnight, only to trigger a data leak that took months to unwind. At another agency, a savvy admin documented every tweak, built a playbook, and saved the company from four-figure support bills.

Signs that an issue has outgrown a DIY fix:

- The issue persists across multiple users and teams.

- Security or compliance is at stake.

- The platform is mission-critical to business outcomes.

- Documentation is missing, outdated, or nonexistent.

Community forums and open-source projects are treasure troves—if you know how to separate signal from noise.

Enterprise-grade fixes: what you pay for

Enterprise support isn’t just about hand-holding—it’s about dedicated expertise, service-level agreements (SLAs), and full-spectrum integration.

| Feature | DIY Solution | Enterprise Solution |
|---|---|---|
| Cost | Low (time investment) | Higher (subscription/support) |
| Support | Community/none | 24/7, expert-backed |
| Scalability | Limited | High, built for growth |
| Security/compliance | Inconsistent | Audited, documented |
| Documentation | Rare | Extensive, always updated |

Table 4: DIY vs. enterprise assistant fix solutions—features and trade-offs.

Source: Original analysis based on BBC Worklife, 2023.

Having a dedicated support team can mean the difference between a five-minute fix and a five-week nightmare. For enterprise-grade guidance, futurecoworker.ai stands out as a reliable resource trusted by high-performing organizations worldwide.

The hybrid approach: best of both worlds?

Sometimes, the smartest play is a hybrid model—empowering power users while keeping enterprise support on speed dial.

- Build a knowledge-sharing community within your organization.

- Define clear escalation protocols for critical failures.

- Invest in both open-source tools and premium support.

- Regularly review what’s working and iterate fast.

A scaling startup might begin with DIY, then layer in enterprise support as complexity grows. The key is knowing when to transition—before small failures metastasize into existential threats.

The future of assistant fixes: self-healing systems and automation

What’s real and what’s hype in self-healing AI

Self-healing AI is the buzzword of the year. Vendors promise assistants that “repair themselves”—but current reality is more modest. While automated error correction and anomaly detection are on the rise, true autonomy is elusive.

According to Microsoft Work Trend Index, 2024, 75% of knowledge workers already use generative AI, with 37% of marketing teams using AI for data-driven recruitment and coordination. Yet, even the most advanced platforms require human oversight for complex breakdowns.

“Automation is only as smart as its last fix,” says Morgan, a lead AI engineer. Self-healing is a tool—not a substitute for ethical, informed maintenance.

Emerging trends: AI that learns from its own failures

Machine learning feedback loops are revolutionizing assistant reliability. New models now analyze past failures, predict triggers, and recommend preemptive action.

- Predictive analytics flag likely failure points based on historical data.

- Error pattern recognition enables smarter alerting and prioritization.

- Crowd-sourced fixes (from user communities) accelerate innovation and patch cycles.
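Error pattern recognition does not have to start with machine learning. A much simpler, non-ML stand-in is to collapse the variable parts of each error message so that similar failures share one fingerprint, then count them. The replacement rules and sample messages below are assumptions for illustration only.

```python
import re
from collections import Counter

UUID_RE = re.compile(r"[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}")


def fingerprint(message):
    """Collapse IDs, addresses, and numbers so similar errors share one key."""
    message = UUID_RE.sub("<uuid>", message)
    message = re.sub(r"\S+@\S+", "<email>", message)
    message = re.sub(r"\d+", "<n>", message)
    return message


def top_patterns(messages, limit=3):
    """Return the most frequent error fingerprints with their counts."""
    return Counter(fingerprint(m) for m in messages).most_common(limit)


messages = [
    "Timeout after 30s calling calendar for ana@example.com",
    "Timeout after 45s calling calendar for ben@example.com",
    "Token expired for session 1234",
]
for pattern, count in top_patterns(messages):
    print(count, pattern)
```

Counts per fingerprint give alerting and prioritization something concrete to rank, and they are a reasonable baseline to compare any learned model against.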

Imagine an enterprise where the assistant not only recovers from glitches, but adapts processes in real time—reducing manual oversight and amplifying productivity.

What businesses should do now to prepare

Preparation is everything.

- Audit current assistant deployments and document all integrations.

- Establish feedback loops between users and development teams.

- Invest in platforms with transparent diagnostic tools and regular updates.

- Create an ongoing training pipeline for users at all levels.

Waiting for perfection is a luxury few can afford. Organizations that invest in resilient, well-documented assistant ecosystems gain not just reliability, but a culture of continuous improvement.

Real-world impact: assistant fix stories that changed the game

Enterprise disaster and recovery: lessons learned

A major logistics company once watched its AI assistant collapse mid-launch, freezing warehouse schedules for 48 hours. Recovery required not just a technical fix, but a total overhaul of escalation protocols, documentation, and staff training.

- Always audit dependencies before rolling out new features.

- Document every fix and share lessons learned company-wide.

- Prioritize communication—panic is the true enemy of recovery.

Startups and scale-ups: agility vs. stability

Startups patch fast. Scale-ups invest in stability. A SaaS company rushed a patch that solved a UI bug but quietly killed API authentication for dozens of key clients. Meanwhile, a rival scale-up spent a week on root cause analysis, launching a thorough fix that held up for months.

Balancing agility with reliability is a dance. Sometimes, a fast patch is necessary; other times, patience and deep analysis win the race.

User testimonials: what fixing the assistant actually felt like

“The day our assistant worked was the day my stress dropped by half,” says Riley, an operations lead at a midsize firm. Other users echo similar relief—describing renewed trust, lower burnout, and a sense of finally being “back in control.”

The emotional impact is real. Fixing the assistant isn’t just technical; it’s psychological. Productivity rebounds and teams rediscover the confidence to focus on what matters.

Beyond the fix: building a resilient assistant ecosystem

Proactive maintenance beats reactive chaos

Proactive management is insurance against the next meltdown. Reactive chaos is a tax on productivity—one paid in lost time, lost deals, and lost morale.

- Schedule routine assistant health checks.

- Review integration logs weekly.

- Rotate security credentials on a fixed timetable.

- Encourage users to report anomalies early.

A tech consultancy slashed downtime by 60% after instituting monthly assistant reviews—small investments with compounding returns.
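Rotating credentials on a fixed timetable only works if someone notices when a rotation is missed. As a minimal sketch, the function below takes a mapping of credential names to last-rotation dates and lists the overdue ones. The 90-day limit and the credential names are assumptions, and a real deployment would read the dates from a secrets manager instead of a dictionary.

```python
from datetime import date, timedelta


def overdue_credentials(last_rotated, today, max_age=timedelta(days=90)):
    """Return names of credentials older than `max_age`, oldest first."""
    overdue = [
        (rotated, name)
        for name, rotated in last_rotated.items()
        if today - rotated > max_age
    ]
    return [name for _, name in sorted(overdue)]


# Hypothetical inventory of assistant credentials.
last_rotated = {
    "mail_oauth": date(2024, 1, 10),
    "crm_api_key": date(2024, 5, 1),
    "calendar_service_account": date(2023, 11, 2),
}
print(overdue_credentials(last_rotated, today=date(2024, 6, 1)))
# ['calendar_service_account', 'mail_oauth']
```

Passing `today` in explicitly keeps the check easy to test and easy to run as part of a scheduled health check.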

Integrating assistants into the enterprise fabric

Embedding assistant fix protocols company-wide means aligning teams—IT, HR, operations, compliance, and end-users all play a role.

- IT: Owns diagnostics and infrastructure.

- HR: Connects assistants to onboarding and training.

- Operations: Translates business needs into workflows.

- Compliance: Audits and documents every fix and update.

Knowledge bases are critical. When fixes are archived and searchable, new hires ramp up faster and old hands avoid relearning painful lessons.

Future-proofing your AI: what to watch next

Staying ahead of the curve means watching for new threats and opportunities.

- Sudden spikes in error rates after updates

- User complaints without clear technical root causes

- Unusual log entries or failed API calls

- Declining user trust or engagement metrics
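The first warning sign, a spike in error rates after an update, can be checked by comparing call outcomes from before and after a release. In this sketch an update is flagged only when the error rate rises by both an absolute and a relative margin. The margins and function names are assumptions to tune against your own baseline.

```python
def error_rate(outcomes):
    """Fraction of failed calls in a list of booleans (True means success)."""
    return outcomes.count(False) / len(outcomes) if outcomes else 0.0


def spiked_after_update(before, after, min_increase=0.05, ratio=2.0):
    """Flag an update when errors rose by an absolute AND a relative margin."""
    old, new = error_rate(before), error_rate(after)
    return (new - old) >= min_increase and new >= ratio * old


before = [True] * 98 + [False] * 2   # 2% errors before the update
after = [True] * 90 + [False] * 10   # 10% errors after the update
print(spiked_after_update(before, after))  # True
```

Requiring both margins avoids paging anyone when a very low error rate doubles to a still very low number, while still catching a real jump.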

Key takeaway: assistant fix isn’t a one-off task—it’s an ongoing discipline. Organizations that embrace uncomfortable truths, invest in real solutions, and foster a culture of shared learning will outlast those hoping for a magic reboot.

Supplementary: adjacent issues and essential deep dives

AI assistant security: risks no one talks about

Rushed fixes often create vulnerabilities: they leave credentials exposed, pull in rogue plugins, or bypass audit trails. In one scenario, a botched assistant repair opened a door for credential-stealing malware, compromising sensitive HR data.

- Always rotate credentials after major fixes.

- Document all third-party integrations and audit them regularly.

- Keep audit trails intact, even during emergency fixes.

- Treat every fix as a potential security event.

Balancing usability and protection is key. If security gets in the way of productivity, users will find workarounds—often riskier than the original flaw.

What makes a ‘fix’ stick: leadership and accountability

Without leadership buy-in, even the best technical fixes fade. One multinational empowered every employee to flag assistant failures, resulting in a flood of small, manageable issues—instead of one catastrophic breakdown.

- Assign ownership for assistant performance.

- Regularly review and act on user feedback.

- Incentivize transparency—reward, don’t punish, problem-spotters.

- Publish quarterly reports on assistant health and reliability.

Accountability is resilience. The more teams share responsibility for assistant fixes, the less likely the next disaster.

Cultural resistance: why some teams never fix their assistants

Some teams cling to broken assistants out of habit, fear, or misplaced loyalty. Overcoming this inertia requires more than technical prowess.

- Offer incentives for reporting and solving assistant issues.

- Foster transparency by sharing post-mortems.

- Celebrate collective wins—fixes that save the day.

When teams see the assistant as a shared ally—not a mysterious, punitive overlord—the culture shifts, and real progress begins.

In the end, “assistant fix” is not a destination. It’s a journey—one that exposes the gap between wishful thinking and operational reality. It’s about ruthless honesty, robust analysis, and relentless collaboration. As the data shows, the enterprises that thrive are the ones that face these truths head-on, investing not in quick patches but in systemic solutions and a culture that values transparency, accountability, and shared learning. The next time your digital coworker falters, remember: the fix isn’t just technical—it’s cultural, behavioral, and uncomfortably human.

Ready to make your AI teammate actually work for you? The fix starts with you—and with choosing partners and platforms that treat “assistant fix” as a discipline, not an afterthought.

Sources

References cited in this article

- Microsoft Work Trend Index, 2024 (microsoft.com)

- Forbes: Employees report AI increased workload (forbes.com)

- ADP Research: Worker Sentiment AI Impact (adpresearch.com)

- Gallup: AI in the Workplace (gallup.com)

- BBC: Panic and Possibility (bbc.com)

- Chrome Unboxed: Google Assistant Needs an Upgrade (chromeunboxed.com)

- Pocket-lint: Google Assistant Losing Features (pocket-lint.com)

- Google Blog: Assistant Update (blog.google)

- AI Multiple: AI Stats (research.aimultiple.com)

- Cognitive Research: Illusions & Skill Decay (cognitiveresearchjournal.springeropen.com)

- Exploding Topics: AI Statistics (explodingtopics.com)

- DW: Interactive AI 2024 (dw.com)

- Georgia Tech: Ethical Issues in AI Assistants (news.gatech.edu)

- Forbes: Future of AI (forbes.com)

- Capital One Tech: AI Myths (capitalone.com)

- Tilburg.ai: AI Myths (tilburg.ai)

- Full Stack AI: Myths (fullstackai.co)

- LinkedIn: Impact of AI Assistants (linkedin.com)

- Medium: Rebooting AI (medium.com)

- McKinsey: State of AI 2024 (mckinsey.com)

- EasyRCA: RCA Tools (easyrca.com)

- Atlan: RCA Guide (atlan.com)

- Codezup: AIOps RCA (codezup.com)

- CIO: AI Disasters (cio.com)

- Medium: AI Disasters 2024 (medium.com)

- SitePoint: AI Coding Assistants (sitepoint.com)

- Nature: Sustainable AI (nature.com)

- EY: AI & Sustainability (ey.com)

- Forbes: Human Factor (forbes.com)

- LinkedIn: Culture Trends (linkedin.com)

- Skadden: AI Regulation 2024 (skadden.com)

- MIT Tech Review: AI Regulation (technologyreview.com)

- Google DeepMind: Ethics (deepmind.google)

- Bitdefender: Cybersecurity Forecast (bitdefender.com)

- deepsense.ai: AI Predictions (deepsense.ai)
