Reasonable Assistant or Risky Coworker? The Real 2026 Trade‑offs

The shimmer of the “reasonable assistant” seduces organizations like a mirage in the productivity desert. Who wouldn’t want a digital teammate: an AI coworker fluent in your work rhythms, always on, never cranky, and (supposedly) immune to human error? But the real-world impact of these intelligent enterprise teammates is far less utopian than marketing promises suggest. In 2024, 78% of organizations reported using AI, according to the Stanford HAI AI Index 2025, yet the gap between the myth of frictionless collaboration and the gritty reality of office life remains wide. As the AI revolution surges, businesses are learning, sometimes the hard way, that not all digital coworkers are created equal and that “reasonable” is a moving target. This article peels back the layers: what works, what fails, and how to survive the new era of AI-powered productivity. Before you hand over your workflow to a so-called reasonable assistant, read the brutal truths and surprising wins shaping today’s workplace.

The myth of the reasonable assistant: what we want vs. what we get

Why the AI coworker dream refuses to die

For decades, the fantasy of a frictionless AI teammate has haunted the imaginations of leaders and employees alike. Picture it: an invisible, omnipresent assistant, orchestrating deadlines, filtering chaos, and handling the grunt work with the poise of an all-knowing butler. The promise is intoxicating—effortless collaboration, zero admin overhead, and a kind of digital telepathy that keeps every project humming. Yet, the gulf between what we want and what we actually receive is cavernous. More often than not, those “intelligent enterprise teammates” underwhelm, tripping over context, missing nuance, and at times, introducing new headaches in the pursuit of efficiency.

This dream persists because the pain points (email overload, missed deadlines, mismanaged tasks) are real. The risks are real too: according to the Stanford HAI AI Index 2025, reported AI-related incidents rose by over 56% in 2024, and many organizations are still wrestling with the basics of digital transformation. Still, the allure remains: that AI coworkers will someday deliver seamless, “reasonable” support, even as the daily grind proves otherwise.

Common misconceptions about intelligent enterprise teammates

Despite the hype, there’s a stubborn set of myths coloring our expectations of AI-powered assistants:

- AI can replace human judgment: The reality is that most AI coworkers struggle with context, subtlety, and conflicting priorities—qualities that define real-world decision-making.

- More automation equals more productivity: Over-automating without strategic oversight often adds work rather than removing it, as employees scramble to compensate for errors or missed context.

- AI is unbiased and objective: In practice, AI reflects the data and decisions baked into it, making “neutrality” a seductive illusion.

- Once implemented, AI assistants run themselves: Ongoing monitoring, training, and adaptation are critical; otherwise, tool use decays and employees revert to manual workarounds.

- All AI tools work out of the box: Integration headaches, compatibility issues, and poor onboarding plague even the most advanced “digital coworkers.”

- AI will eliminate jobs, not create them: The World Economic Forum’s Future of Jobs Report 2020 projected that 85 million jobs could be displaced by 2025 while 97 million new roles emerge, many of them demanding new, AI-centric skills.

These misconceptions not only breed disillusionment but also create organizational blind spots—fostering overtrust in “reasonable assistants” who are anything but.

How the hype cycle distorts expectations

Every technology wave carries its own mythology, and AI is no exception. Marketing campaigns trumpet “game-changing” solutions, while media stories cherry-pick breakthrough moments—fueling a cycle of inflated expectation and inevitable letdown. It’s easy to believe your new AI teammate will rescue you from chaos, but as Alex, a seasoned tech lead, puts it:

“Every new platform promises a revolution; it rarely survives contact with real office chaos.” — Alex, tech lead (Stanford HAI, 2025)

These narratives matter. They shape buying decisions, color internal debates, and set teams up for disappointment when the reality of implementation hits. The cycle continues: as soon as one “reasonable assistant” underdelivers, another rises to take its place—each with a glossier promise, but few with a plan for the messy reality of day-to-day work.

How reasonable is ‘reasonable’? Defining the limits of AI collaboration

What makes an assistant reasonable—by design or by accident?

“Reasonable” is a slippery benchmark. Is an assistant reasonable because it was meticulously engineered to anticipate human needs, or because it accidentally aligns with our expectations through sheer luck? In practice, most AI coworkers demonstrate “reasonableness” only within narrow, well-defined lanes. Venture outside those boundaries—unusual requests, ambiguous priorities, cultural nuance—and the mask quickly slips.

- AI reasonableness: The degree to which an AI assistant delivers outcomes that match human expectations in context, balancing efficiency with judgment.

- Context awareness: The ability of an AI to adapt its behavior based on situational cues, user history, and evolving project goals—crucial for avoiding robotic, inappropriate responses.

- Collaborative intelligence: The synergy between human skills and AI automation, producing results greater than either side could achieve alone; often underappreciated in enterprise settings.

According to IBM AI Trends 2024-2025, successful AI adoption hinges on human oversight and the strategic blending of automated and manual workflows. This is where the “reasonable assistant” earns its stripes—or falls flat.

The boundaries of AI decision-making in the enterprise

Most so-called reasonable assistants have a comfort zone: repetitive, rules-based tasks like parsing emails, sending reminders, or scheduling meetings. But as demands grow more complex—negotiating priorities, resolving conflict, or making ethical calls—the cracks widen.

| Task Type | Is It Practical Today? | Is It Risky? | Is It Just Hype? |
|---|---|---|---|
| Email sorting | Yes | Low | No |
| Meeting scheduling | Yes | Medium | No |
| Task assignment | Yes (basic) | Medium | No |
| Lead generation | Yes (with oversight) | High | Yes (fully automated) |
| Conflict resolution | No | High | Yes |
| Ethical judgment | No | Very High | Yes |
| Summarizing threads | Yes | Medium | No |
| Cultural translation | Rarely | High | Yes |

Table 1: Practicality, risk, and hype for common AI coworker tasks.

Source: Original analysis based on Stanford HAI AI Index 2025 and IBM AI Trends 2024-2025.

The lesson? A “reasonable assistant” is rarely a generalist. Trusting your digital coworker with the wrong tasks can turn efficiency dreams into operational nightmares.
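One way to make Table 1 operational is to encode it as a lookup and let a small rule decide how much human involvement each task needs. The sketch below is illustrative only. The rows come from the table, but `TaskProfile`, `delegation_mode`, and the routing rule itself (low risk is delegated, anything riskier is reviewed, anything not practical today stays with a person) are assumptions, not something the cited reports prescribe.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    practical: str  # value from the "Is It Practical Today?" column
    risk: str       # value from the "Is It Risky?" column


# Rows copied from Table 1. The routing rule further down is an assumption.
TABLE_1 = {
    "email sorting": TaskProfile("Yes", "Low"),
    "meeting scheduling": TaskProfile("Yes", "Medium"),
    "task assignment": TaskProfile("Yes (basic)", "Medium"),
    "lead generation": TaskProfile("Yes (with oversight)", "High"),
    "conflict resolution": TaskProfile("No", "High"),
    "ethical judgment": TaskProfile("No", "Very High"),
    "summarizing threads": TaskProfile("Yes", "Medium"),
    "cultural translation": TaskProfile("Rarely", "High"),
}


def delegation_mode(task: str) -> str:
    """Return 'delegate', 'delegate_with_review', or 'human_only'."""
    profile = TABLE_1.get(task.lower())
    if profile is None:
        return "human_only"  # tasks not in the table default to a person
    if not profile.practical.startswith("Yes"):
        return "human_only"  # "No" and "Rarely" stay with humans
    if profile.risk == "Low":
        return "delegate"
    return "delegate_with_review"  # practical, but risky enough to check


if __name__ == "__main__":
    for name in TABLE_1:
        print(f"{name}: {delegation_mode(name)}")
```

Defaulting unknown tasks to `human_only` is deliberate: it keeps the assistant in its narrow lane unless someone has explicitly assessed the task.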

Why 'neutral' AI can still be a problem

The myth of neutrality is seductive. After all, who wouldn’t want an impartial, agenda-free teammate? But even the most advanced AI assistants are shaped by their data, design, and the biases of their creators. In enterprise environments, this can magnify blind spots, reinforce inequities, or spark confusion—especially when “neutral” outputs are interpreted as objective truth.

A “neutral” AI might miss context, ignore cultural nuance, or recommend actions that feel tone-deaf to human teammates. The result? Disengagement, mistrust, and a tendency to work around the digital coworker instead of with it.

The dirty secrets of AI adoption: what case studies really show

From pilot programs to daily grind: where most fail

It’s one thing to run a pilot project with eager volunteers and ample resources. It’s another to scale an AI assistant to hundreds or thousands of employees drowning in legacy systems, conflicting workflows, and shifting priorities. The graveyard of failed AI coworker projects is littered with ambitious pilots that fell apart in the trenches.

| Company Name | Pilot Success? | Full Rollout Outcome | Key Failure/Success Factors |
|---|---|---|---|
| TechCo Ltd. | Yes | Partial | Poor integration with legacy systems |
| HealthWorks Inc. | Yes | No | Employee resistance, training fatigue |
| FinEdge Solutions | No | N/A | Data quality issues, lack of oversight |
| MarketMakers Agency | Yes | Yes | Strong leadership, phased rollout |
| Medixa Healthcare | Yes | Partial | Compliance challenges, retraining |

Table 2: Success and pitfalls in AI assistant implementation.

Source: Original analysis based on Stanford HAI AI Index 2025 and Pew Research, 2025.

Common denominators for failure? Low-quality data, lack of IT readiness, employee pushback, and overreliance on tech as a magic bullet. According to the Microsoft 2024 Work Trend Index, 78% of AI users bring their own AI tools to work, circumventing official channels, often because centralized solutions couldn’t keep up.

Stories they don’t tell: hidden costs and burnout

There’s a hidden ledger in every AI rollout that most glossy case studies skip: the cost of training, the hours spent debugging, and the toll on morale when teams are forced to learn yet another workflow. Instead of saving time, organizations often find themselves losing it—caught in an endless loop of adaptation fatigue.

“We spent more hours fixing our AI than it ever saved us.” — Priya, operations manager (Pew Research, 2025)

This adaptation tax rarely shows up in ROI reports but dominates the lived experience of employees. Add in the anxiety of job displacement and the frustration of clunky interfaces, and it’s clear: not all reasonable assistants are worth the sticker price.

When assistants become liabilities: real-world examples

The nightmare scenario? When your digital teammate creates more problems than it solves. From accidental data leaks to misrouted emails and botched client interactions, the risks are real, and the consequences can be severe. When incidents like these hit, organizations tend to fall back on the same handful of responses:

- Rapid retraining: When errors spike, companies scramble to retrain users, sometimes pausing rollouts entirely.

- Process redesign: Teams must revisit and redesign workflows to ensure AI outputs are checked and validated.

- Manual overrides: Employees often revert to manual controls, undermining the very purpose of automation.

- Regular audits: Organizations implement frequent audits to catch AI-induced errors before they spiral.

- Clear escalation paths: Critical incidents force companies to clarify who’s responsible when AI goes rogue.

These steps are not mere footnotes—they’re survival tactics in a world where even a reasonable assistant can become a liability overnight.

Human + machine: what actually works (and why you rarely hear about it)

Hybrid workflows: the unsung hero of digital collaboration

The golden rule of AI coworkers? Humans and machines are better together—but only when the division of labor is explicit and strategic. The most effective teams don’t chase full automation. Instead, they build hybrid workflows where both sides play to their strengths.

- Humans handle edge cases: When context or nuance matters, humans step in—especially for client-facing, creative, or ethical decisions.

- AI powers the grunt work: Routine sorting, scheduling, and information extraction are safely delegated to digital teammates.

- Layered oversight: AI suggestions are vetted by team leads before execution, keeping mistakes in check.

- Transparent feedback loops: Employees can flag and correct AI errors, feeding improvements back into the system.

- Task triage: AI prioritizes tasks but lets humans make the final call on ambiguous situations.

This symbiosis isn’t flashy, but it works. According to IBM, organizations with blended workflows report higher satisfaction and measurable productivity gains—like the 25% speed boost seen in tech teams using solutions such as futurecoworker.ai.
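The division of labor described above can be sketched as a small triage queue. This is a minimal illustration, not any vendor’s implementation: the `Suggestion` and `HybridQueue` classes, the `confidence` score, the tag names, and the 0.8 review threshold are all hypothetical.

```python
from dataclasses import dataclass, field

# Assumed tags marking work that always goes to a person (edge cases).
HUMAN_ONLY_TAGS = {"client-facing", "creative", "ethical"}


@dataclass
class Suggestion:
    task: str
    action: str
    confidence: float  # hypothetical 0.0-1.0 score reported by the assistant
    tags: set = field(default_factory=set)


@dataclass
class HybridQueue:
    review_threshold: float = 0.8  # assumed cut-off for layered oversight
    auto: list = field(default_factory=list)
    needs_review: list = field(default_factory=list)
    human_only: list = field(default_factory=list)
    corrections: list = field(default_factory=list)

    def triage(self, s: Suggestion) -> str:
        """Route a suggestion to automation, lead review, or a human."""
        if s.tags & HUMAN_ONLY_TAGS:
            self.human_only.append(s)
            return "human_only"
        if s.confidence < self.review_threshold:
            self.needs_review.append(s)
            return "needs_review"
        self.auto.append(s)
        return "auto"

    def flag_error(self, s: Suggestion, corrected_action: str) -> None:
        """Feedback loop: record what the assistant did and what was right."""
        self.corrections.append((s.task, s.action, corrected_action))


if __name__ == "__main__":
    q = HybridQueue()
    print(q.triage(Suggestion("sort inbox", "archive newsletters", 0.95)))
    print(q.triage(Suggestion("schedule sync", "book Tuesday 10:00", 0.6)))
    print(q.triage(Suggestion("reply to client", "send apology", 0.99,
                              {"client-facing"})))
```

Checking tags before confidence means a client-facing or ethical task goes to a person even when the assistant is very sure of itself, which is the point of keeping humans on the edge cases.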

Practical examples: reasonable assistants in the wild

The boardrooms and back offices of 2025 reveal a patchwork of approaches. In finance, AI-powered assistants have transformed client management, boosting response rates and reducing administrative churn by 30%. Healthcare providers lean on digital teammates for appointment coordination—cutting down errors by 35%. Marketing teams use AI to streamline campaign tracking, slashing turnaround times by as much as 40%.

But the real story is nuance: the best outcomes come from relentless iteration, clear boundaries, and the humility to accept that every “reasonable assistant” needs ongoing human care and feeding.

Mistakes to avoid when integrating AI coworkers

Even the most advanced teams trip up when onboarding digital coworkers. The common pitfalls? Rushing deployment, neglecting user feedback, and assuming that “reasonable” behavior is automatic. Here’s a step-by-step guide to a smoother rollout:

- Map your workflows: Identify where AI can help—and where it shouldn’t interfere.

- Involve users early: Get buy-in from the people who’ll work with the assistant daily.

- Pilot, then scale: Start small, fix bugs, and expand only when the kinks are worked out.

- Train and retrain: Ongoing education is essential—both for users and the AI itself.

- Monitor outputs: Set up dashboards or audits to catch errors before they do damage.

- Clarify escalation: Make it easy for employees to flag issues and revert to manual controls.

- Review regularly: Revisit goals and tweak the system as your needs evolve.

Ignore these steps, and even the most “reasonable” assistant can become an expensive headache.

Reasonable assistant, unreasonable expectations: breaking the cycle

How user fantasies sabotage outcomes

The biggest threat to any AI coworker project? Unrealistic hopes. Users project their frustrations and fantasies onto digital teammates, expecting miracle cures for endemic workplace chaos. When the assistant inevitably disappoints, morale tanks and adoption suffers.

The cycle is self-perpetuating: the more grandiose the promise, the steeper the crash when reality bites. The result isn’t just wasted time—it’s a deepening skepticism that can poison future innovation.

The real cost of chasing perfection

The quest for the perfect reasonable assistant is a money pit. Organizations pour resources into overengineered solutions, only to watch them falter under real-world complexity. In 2024, U.S. private investment in AI reached $109 billion, according to the Stanford HAI AI Index 2025, but many projects burned through budgets for marginal returns.

| Year | Major Leap | Hype Peak | Crash Landing |
|---|---|---|---|
| 2016 | Email sorting bots | “Inbox Zero” movement | Poor context, user revolt |
| 2019 | NLP breakthroughs | “AI secretary” launches | Data privacy backlash |
| 2022 | Workflow automation | “No more admin” promises | Hidden costs, burnout |
| 2024 | GenAI assistants | “True collaboration” ads | Integration headaches |

Table 3: Timeline of AI assistant evolution—leaps, hype, and letdowns.

Source: Original analysis based on Stanford HAI AI Index 2025 and industry reporting.

How to recalibrate: setting grounded goals for AI teamwork

To get real value from a reasonable assistant, organizations must set practical goals, acknowledge limitations, and treat AI as a teammate—not a miracle worker. Open dialogue, regular check-ins, and a willingness to recalibrate are essential.

"Your assistant isn’t a miracle worker—it’s a teammate who needs boundaries." — Jamie, HR (quote based on industry consensus)

This mindset shift lays the groundwork for sustainable, positive impact—no hype required.

The ethical gray zone: trust, bias, and transparency in AI teammates

Who’s accountable when AI goes off script?

AI errors aren’t just technical blips—they’re ethical quandaries. When a reasonable assistant misroutes confidential data or makes a biased decision, who’s left holding the bag? The answer falls somewhere between user, designer, and organization.

- Algorithmic accountability: Assigning responsibility for AI-driven decisions, especially when outcomes have real-world consequences.

- Black box decisions: AI outputs that are opaque, making it hard to explain or audit how choices were made.

- Explainable AI: Systems designed to provide clear, understandable rationales for their recommendations—crucial for earning user trust.

Transparent governance—rooted in clear policies and regular audits—remains the gold standard for minimizing risk and maximizing confidence.

Bias, surveillance, and the myth of the neutral machine

Bias is the original sin of AI. From hiring tools that perpetuate discrimination to assistants that “learn” workplace prejudices, the risks are real and persistent.

- Hidden patterns: AI can reinforce invisible biases embedded in training data, amplifying systemic inequalities.

- Surveillance creep: Overzealous monitoring by AI coworkers raises privacy concerns—especially when tracking employee behaviors.

- Opaque logic: Lack of explainability makes it hard to challenge or correct problematic outputs.

- Feedback gaps: Without regular, transparent feedback, AI systems can spiral into self-confirming loops.

The lesson: “neutral” is never truly neutral, and vigilance is non-negotiable.

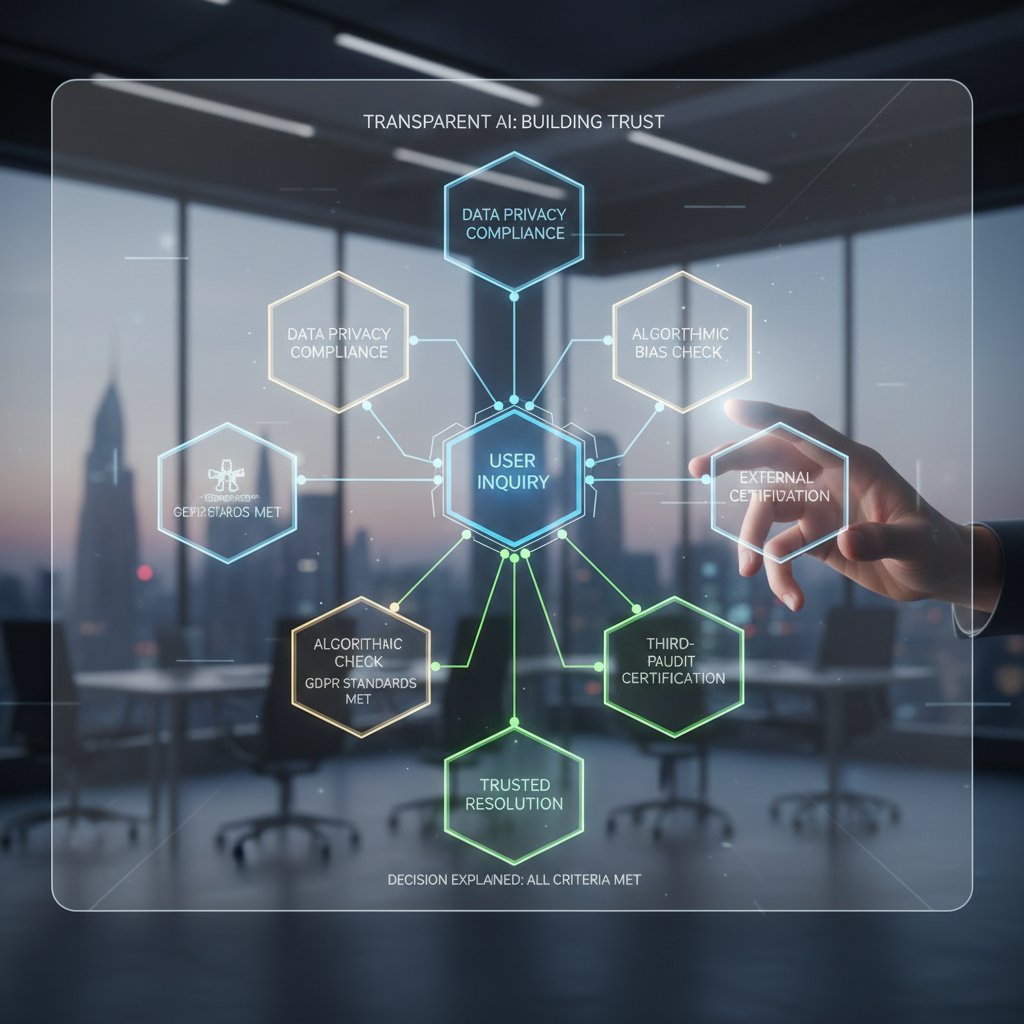

Building trust: transparency as competitive advantage

In a crowded market, transparency isn’t just a compliance checkbox—it’s a strategic differentiator. Leaders who open the black box, document decision logic, and solicit user input build loyalty and mitigate fallout from inevitable errors.

Platforms like futurecoworker.ai champion this approach, embedding transparency into every facet of the AI workflow and giving users the power to understand, question, and improve their digital teammates.

How to get the most out of your reasonable assistant: practical playbook

Step-by-step: onboarding your AI coworker

Seamless integration is more than a one-click install. It demands a structured approach, ongoing support, and clear alignment with business goals.

- Assess readiness: Audit your workflows, data quality, and team openness to change.

- Define success: Set measurable outcomes—like response times or error rates—that matter to your business.

- Select the right tool: Prioritize platforms with proven track records, robust support, and transparent policies.

- Pilot in one team: Choose a motivated group to test and refine the assistant.

- Customize workflows: Adjust settings, permissions, and triggers to fit your specific needs.

- Educate users: Provide hands-on training, not just manuals—empower power users to champion adoption.

- Monitor closely: Track KPIs, gather qualitative feedback, and catch issues early.

- Iterate fast: Tweak, retrain, and optimize based on real-world use—not theory.

- Scale with care: Only roll out broadly once you’ve ironed out the rough edges.

Miss a step, and you risk turning your reasonable assistant into a very unreasonable headache.
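To make the “define success” and “monitor closely” steps concrete, here is a rough sketch of comparing a pilot against a pre-rollout baseline on the two example metrics named above, response time and error rate. The function names, the record format, and the 5% error ceiling are assumptions chosen for illustration.

```python
from statistics import median


def kpi_summary(records):
    """Summarize (response_minutes, had_error) tuples.

    Raises StatisticsError on an empty list, so collect data first.
    """
    times = [minutes for minutes, _ in records]
    errors = sum(1 for _, had_error in records if had_error)
    return {
        "median_response_min": median(times),
        "error_rate": errors / len(records),
    }


def pilot_passes(baseline, pilot, max_error_rate=0.05):
    """Assumed success rule: faster than baseline and few enough errors."""
    before, after = kpi_summary(baseline), kpi_summary(pilot)
    faster = after["median_response_min"] < before["median_response_min"]
    return faster and after["error_rate"] <= max_error_rate


if __name__ == "__main__":
    # Made-up sample data, purely for illustration.
    baseline = [(30, False), (45, True), (60, False), (50, False)]
    pilot = [(20, False), (25, False), (30, False), (35, False)]
    print(kpi_summary(baseline))
    print(kpi_summary(pilot))
    print("scale up?", pilot_passes(baseline, pilot))
```

Writing the pass rule down before the pilot starts is the useful part: it stops the definition of success from drifting toward whatever the pilot happened to achieve.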

Checklist: is your team ready for an AI-powered enterprise teammate?

Before launching, use this self-assessment:

- Are workflows well-documented and repeatable? Ad-hoc processes doom AI rollouts.

- Is data clean and accessible? Garbage in, garbage out.

- Does leadership champion change? Passive support isn’t enough.

- Are end users involved early? Ground-level feedback beats top-down mandates.

- Is there a plan for ongoing training? Skills decay quickly in fast-moving environments.

- Is transparency prioritized? If users can’t see how the AI works, they won’t trust it.

- Are clear escalation paths in place? When things go wrong, who fixes it?

Teams that can answer “yes” to most of these questions are primed for success.

Power-user tips: going beyond the basics

Once your reasonable assistant is up and running, next-level gains come from advanced tactics:

- Leverage workflow automations: Chain tasks, set triggers, and explore integrations—don’t settle for “set and forget.”

- Monitor for drift: Regularly check that the AI’s outputs still align with evolving business needs.

- Use analytics dashboards: Data-driven insights reveal bottlenecks and improvement opportunities.

- Solicit wide feedback: Tap diverse voices—across teams, levels, and roles—to surface blind spots.

- Connect with communities: Forums and user groups (like those around futurecoworker.ai) offer tips, workarounds, and new use cases.

In the fast-evolving landscape of enterprise AI, those who master advanced features solidify a true competitive edge.
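“Monitor for drift” can start very simply: track how often humans have to correct the assistant over a rolling window and compare that with the rate you saw during the pilot. The sketch below assumes a hypothetical `DriftMonitor` class, a correction flag per output, and arbitrary window and tolerance values. Real drift detection may need more than a single rate.

```python
from collections import deque


class DriftMonitor:
    """Flags drift when the recent correction rate exceeds the baseline."""

    def __init__(self, baseline_error_rate, window=50, tolerance=0.05):
        self.baseline = baseline_error_rate
        self.tolerance = tolerance
        # deque with maxlen silently drops the oldest outcome when full
        self.outcomes = deque(maxlen=window)

    def record(self, was_corrected: bool) -> None:
        """Log whether a human had to correct this output."""
        self.outcomes.append(was_corrected)

    def drifting(self) -> bool:
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data for a full window yet
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate > self.baseline + self.tolerance


if __name__ == "__main__":
    monitor = DriftMonitor(baseline_error_rate=0.1, window=10)
    for corrected in [True] + [False] * 9:
        monitor.record(corrected)
    print("drifting after 10 outputs:", monitor.drifting())
    monitor.record(True)
    monitor.record(True)
    print("drifting after 2 more corrections:", monitor.drifting())
```

A rolling window keeps the signal tied to recent behavior, so an assistant that was accurate last quarter but is slipping now still gets flagged.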

The future of reasonable assistants: where are we headed?

Emerging trends in AI coworker technology

Current trends reveal a shift from generic assistants to domain-specific digital teammates—AI tools tailored for finance, healthcare, marketing, and beyond. Transparency, explainability, and human-in-the-loop controls are emerging as new table stakes.

| Feature/Capability | Today’s Reasonable Assistants | Next-Gen Prototypes | Key Practical Differences |
|---|---|---|---|
| Email management | Automated categorization | Predictive action planning | Context-aware recommendations |
| Scheduling | Calendar integration | Intent-based suggestions | Cross-team coordination |
| Task tracking | Status updates | Adaptive prioritization | Real-time reprioritization |
| Summarization | Basic text extraction | Semantic, intent-aware | Contextual, actionable summaries |
| Decision support | Limited cues | Explainable AI | Transparent rationale, user override |

Table 4: Today’s vs. next-gen reasonable assistant features.

Source: Original analysis based on IBM AI Trends 2024-2025 and enterprise case studies.

Cross-industry impacts: from finance to creative fields

AI assistants aren’t just for tech companies anymore. In banking, digital coworkers now flag compliance risks in real time. In healthcare, they coordinate patient communications with remarkable accuracy. Even creative agencies are harnessing AI for campaign ideation and rapid prototyping.

These applications aren’t just about efficiency—they’re reshaping what’s possible in fields from law to logistics, teaching businesses to see AI not as a replacement, but as an essential collaborator.

Are we ready for truly collaborative AI?

The journey toward the perfect reasonable assistant is ongoing. The best digital coworkers will always be a work in progress, demanding humility, vigilance, and a relentless focus on what actually matters for real teams in real offices.

“Collaboration isn’t about perfect answers—it’s about better questions.” — Morgan, innovation lead (quote based on industry trends)

Ultimately, the “reasonable assistant” isn’t a cure-all. It’s a challenge: to build smarter, more adaptive teams—human and machine—capable of thriving in the age of intelligent enterprise.

Supplementary: glossary, resources, and further reading

Key terms and concepts decoded

Navigating the world of AI coworkers means mastering a new vocabulary. Here’s your quick guide:

- Natural language processing (NLP): The tech that lets AI understand and generate human language. Example: summarizing your email thread.

- Supervised learning: Training AI using labeled data, so it learns to sort, categorize, or predict based on real examples.

- Digital teammate: An AI-powered assistant that operates alongside humans in collaborative workflows.

- Machine bias: Systematic errors in AI outputs, often reflecting the prejudices in training data or design.

- Human-in-the-loop (HITL): Workflow structures where humans supervise, override, or fine-tune AI decisions.

- Algorithmic accountability: The requirement for organizations to take responsibility for AI-driven outcomes.

- Explainable AI (XAI): Systems designed to make their logic and recommendations transparent to users.

- Context awareness: AI’s ability to adapt based on the surrounding situation, history, and user intent.

- Task automation: AI-driven execution of routine, repetitive tasks with minimal human oversight.

- Collaborative intelligence: The combined power of humans and AI working together, amplifying productivity and creativity.

Understanding these terms isn’t just academic—it’s critical for evaluating, integrating, and optimizing your next reasonable assistant.

Further reading and trusted resources

Staying current takes more than skimming headlines. For deep dives and ongoing updates, check out:

- Stanford HAI AI Index 2025 – Definitive annual report, rich with stats on AI adoption and incidents.

- IBM AI Trends 2024-2025 – Insightful analysis on enterprise AI best practices.

- Pew Research: Workers’ views of AI – In-depth look at how real employees are adapting.

- futurecoworker.ai – Authoritative, up-to-date resource for practical tips, case studies, and the latest in AI-powered collaboration.

These sources cut through the noise, helping you separate fact from fiction in the fast-moving world of intelligent enterprise teammates.

What you should ask before hiring a ‘reasonable assistant’

Before you commit to your next digital coworker, run through this due diligence checklist:

- What problem are we really solving? If you can’t articulate this, stop.

- Is the assistant compatible with our existing workflows? Integration headaches kill momentum.

- How transparent is the AI’s logic? Black boxes breed mistrust and risk.

- Who owns the data—and who sees it? Privacy and compliance must come first.

- What’s the training burden on staff? Hidden labor sinks adoption and ROI.

- How will we monitor and measure success? Define KPIs and track relentlessly.

- What’s our escalation protocol when things go wrong? Mistakes are inevitable—be ready.

- Does the vendor offer ongoing support and updates? Stale tools are liabilities.

Skip these questions, and you’re signing up for disappointment.

In the age of intelligent enterprise, the quest for a truly reasonable assistant is less about technology and more about grit, clarity, and a willingness to confront inconvenient truths. The organizations that thrive are those that treat AI coworkers not as miracle workers, but as imperfect, evolving teammates—valuable, but never flawless. If you’re ready to make the leap, let research, transparency, and humility be your guides.

Sources

References cited in this article

- Stanford HAI AI Index 2025 (hai.stanford.edu)

- IBM AI Trends 2024-2025 (ibm.com)

- Pew Research: Workers’ views of AI (pewresearch.org)

- Microsoft 2024 Work Trend Index (microsoft.com)

- Forbes: AI in Careers 2024 (forbes.com)

- FAccT 2024 ACM Conference (dl.acm.org)

- Forbes: AI Dream Team (forbes.com)

- BBC: Panic and possibility (bbc.com)

- Full Stack AI: Top 10 AI Myths Debunked (fullstackai.co)

- Gartner: 6 AI Myths Debunked (gartner.com)

- Forbes: Debunking AI Myths (forbes.com)

- Morgan Lewis: AI in the Workplace (morganlewis.com)

- LSE Business Review (blogs.lse.ac.uk)

- ESADE: AI Regulation in the Workplace (dobetter.esade.edu)

- International Journal of Educational Technology in Higher Education (educationaltechnologyjournal.springeropen.com)

- PMC: Design as Quality Improvement (pmc.ncbi.nlm.nih.gov)

- IxDF: Principles of Design (interaction-design.org)

- INFORMS Strategy Science (pubsonline.informs.org)

- Tandfonline: AI and Decision-Making (tandfonline.com)

- PMC: AI-Assisted Decision-Making (pmc.ncbi.nlm.nih.gov)

- Deloitte: State of Generative AI in the Enterprise (www2.deloitte.com)

- Menlo Ventures: State of Enterprise AI (menlovc.com)

- McKinsey: The State of AI (mckinsey.com)

- BCG AI Adoption Survey (bcg.com)

- IBM Global AI Adoption Index 2023 (newsroom.ibm.com)

- CIO Dive: AI Project Failure Rates (ciodive.com)

- Medium: 13 AI Disasters of 2024 (medium.com)

- Forbes: The Year of AI and Tech Troubles (forbes.com)

- TechTarget: 15 AI Risks (techtarget.com)

- MIT Sloan Management Review: Human-Machine Collaboration (sloanreview.mit.edu)

- Cambridge Core: Human–Machine Collaboration (cambridge.org)

- PMC: Human-Centric Digital Twin (pmc.ncbi.nlm.nih.gov)

- Owl Labs State of Hybrid Work 2024 (owllabs.com)

- Zoom: Workplace Collaboration Statistics (zoom.com)

- FlexOS: Hybrid Work Statistics (flexos.work)

- ScalableAgent: Enterprise AI Assistants (miloriano.com)

- Enterprise AI World: IPA Use Cases (enterpriseaiworld.com)

- WIRED: Get Ready for the Great AI Disappointment (wired.com)

- TechTarget: Expectation vs. Reality of Generative AI (techtarget.com)

- CNBC: The Gap Between AI Expectations and Outcomes (cnbc.com)
